Six Data Shifts That Will Define Enterprise AI in 2026

$19B in M&A consolidation signals data infrastructure is the new AI moat. What decision makers need to know about vendor dynamics, build vs buy, and strategic timing.

The model wars are over. The data wars have begun.

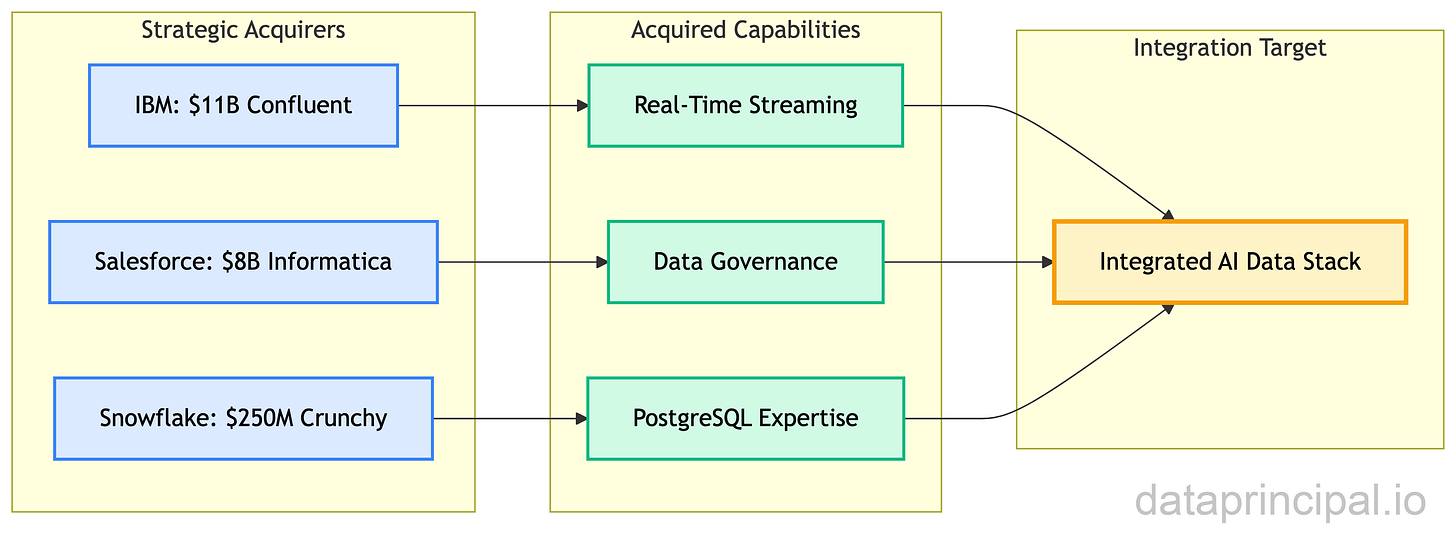

While the industry obsessed over GPT-5 and Gemini 3, something more consequential happened: over $19 billion in major data infrastructure deals since late 2024. IBM acquired Confluent for $11 billion. Salesforce bought Informatica for $8 billion. Snowflake picked up Crunchy Data for $250 million. Supabase raised $200 million at a $2 billion valuation.

The message is clear: data infrastructure is the new competitive moat, not models. Every company has access to the same LLMs through ChatGPT, Claude, and Gemini. What differentiates enterprises is the data they can feed those models, the infrastructure that moves that data, and the governance that makes it trustworthy.

Why Model Access Isn’t Your Competitive Advantage

Here’s what the commodity AI argument means for your strategy:

Whatever you get from ChatGPT, your competitors get too. The API costs the same. The model performs identically. There is no competitive advantage in model access.

The advantage comes from proprietary data fed into that model. It comes from infrastructure that retrieves the right context at the right time. It comes from governance that ensures your AI doesn’t hallucinate customer financials or expose PII.

Yet most enterprise data infrastructure wasn’t designed for AI workloads:

Traditional data warehouses fall short. The data infrastructure you’ve invested in (Snowflake, BigQuery, Oracle) can’t power AI search and retrieval without significant extensions or replacements. Your existing investments may not carry over to AI.

Adding AI capabilities means adding vendors. Specialized AI databases require new vendor contracts, new operational expertise, new backup strategies, new compliance reviews. What should be a capability extension becomes a platform expansion with all the associated costs and risks.

Cloud provider managed services add vendor lock-in. AWS Bedrock Knowledge Base and Azure AI Search simplify operations but tie you to specific ecosystems at a moment when multi-cloud strategies are essential.

The cost of inaction is measurable. IBM’s 2023 Global AI Adoption Index shows 42% of enterprise-scale organizations already have AI actively deployed (not proof-of-concept, but production AI integrated into core business processes). Organizations without a mature data infrastructure will hit a wall: hallucinations from poor retrieval, latency from fragmented systems, and compliance failures from ungoverned data flows.

The $19+ billion in M&A isn’t random acquisition activity. It’s enterprises scrambling to build the data foundations that AI applications demand.

Six Infrastructure Shifts Reshaping Enterprise AI

Six shifts are reshaping enterprise data infrastructure for the AI era. Understanding them lets you make strategic bets rather than tactical scrambles.

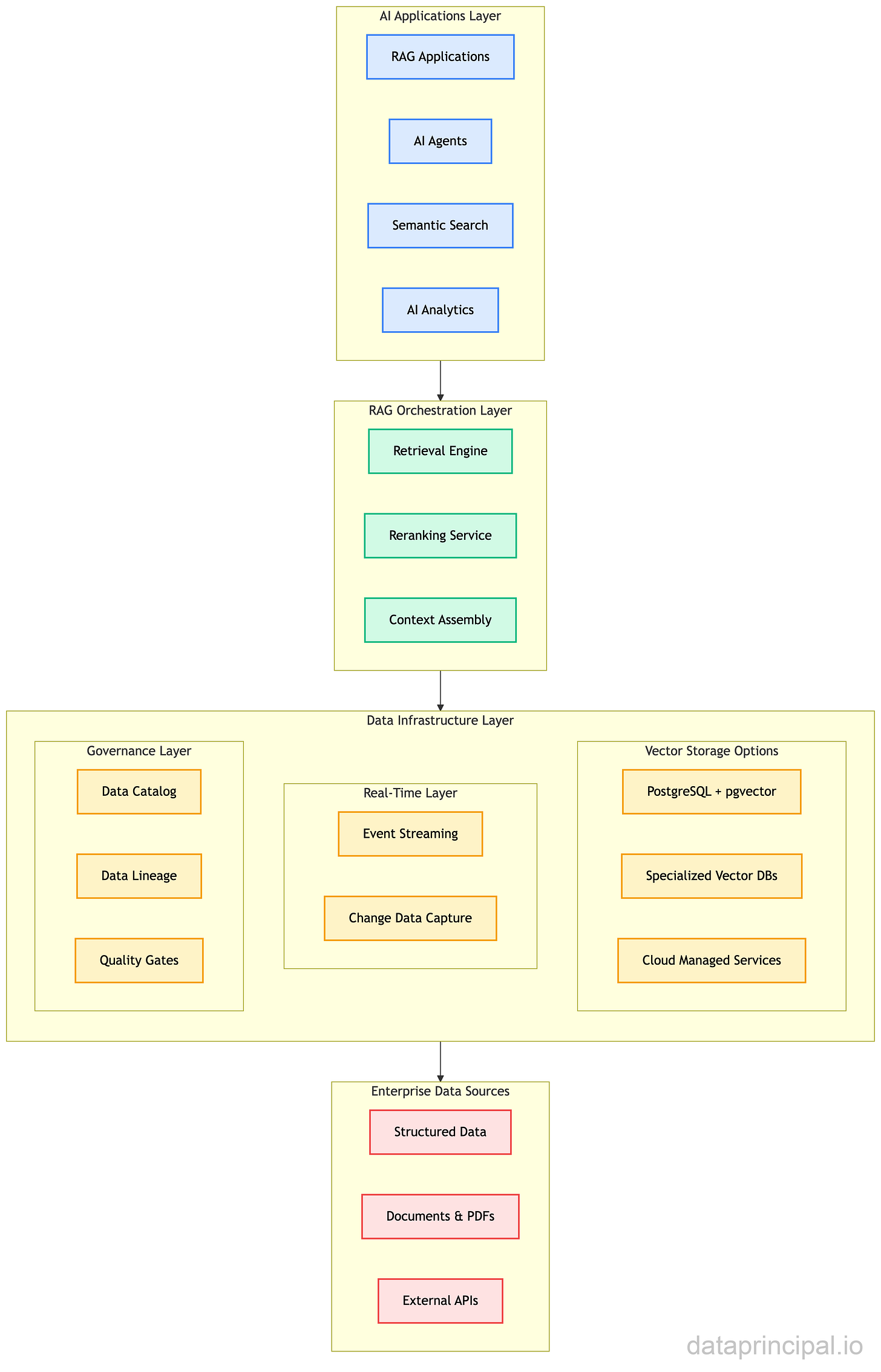

Reference Architecture: Enterprise AI Data Infrastructure

Component Overview

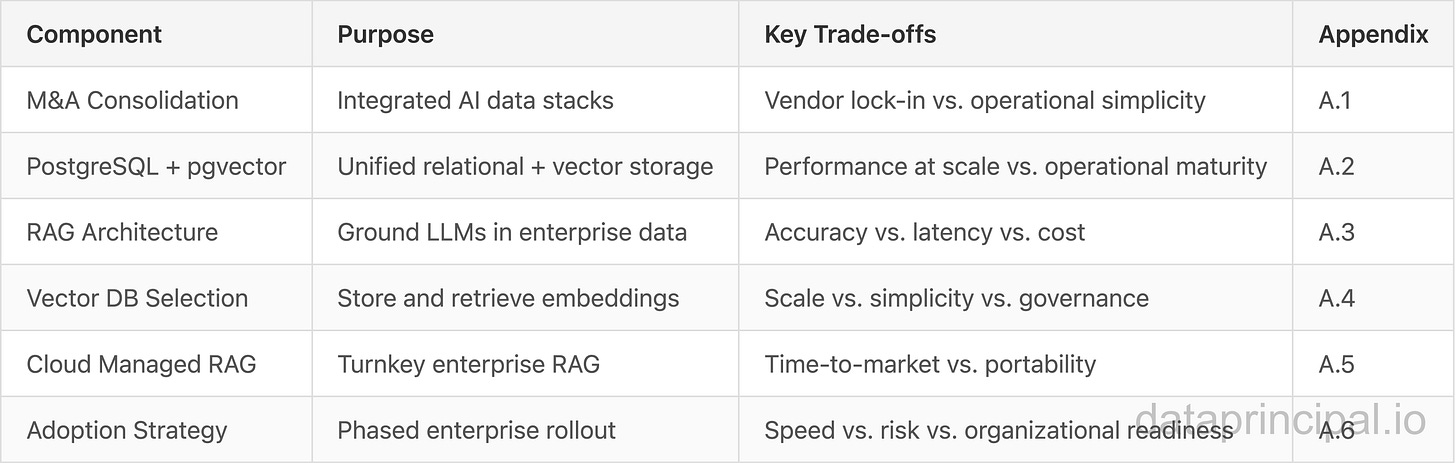

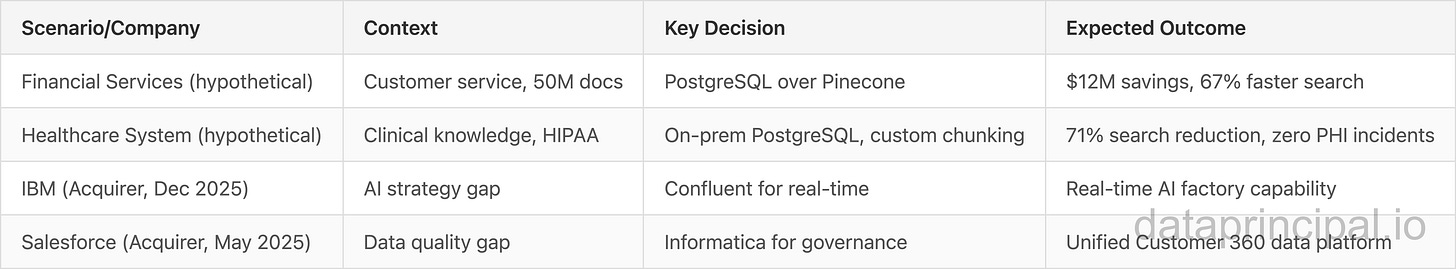

Shift 1: M&A Consolidation Wave. The $19+ billion in acquisitions since late 2024 signals that major players are building integrated AI data stacks. IBM’s Confluent acquisition (December 2025) brings real-time streaming to its AI portfolio. Salesforce’s Informatica purchase (May 2025) adds data governance to its CRM AI. Snowflake’s Crunchy Data deal (June 2025) brings PostgreSQL expertise in-house. This consolidation reduces vendor fragmentation but requires strategic vendor selection.

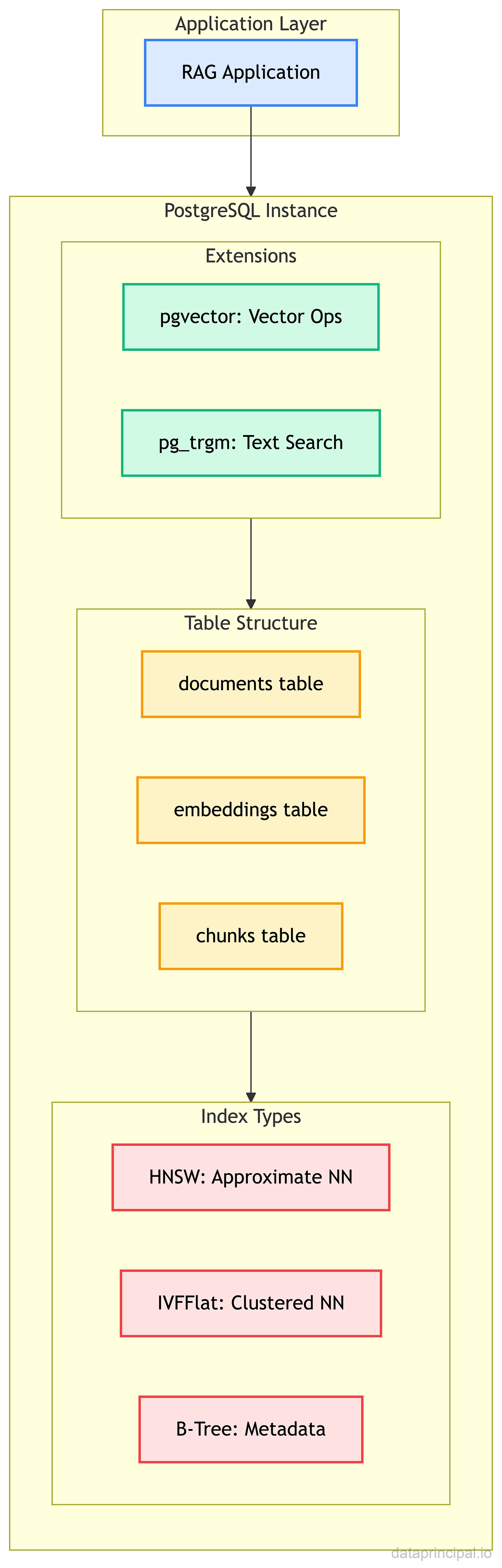

Shift 2: PostgreSQL Renaissance. PostgreSQL extensions for AI can match or beat specialized databases at a fraction of the cost. For enterprises already running PostgreSQL, adding AI capabilities requires no new infrastructure, no new operational expertise, no additional vendor contracts. The “boring database” becomes the strategic choice. (Technical benchmarks: Appendix A.2)

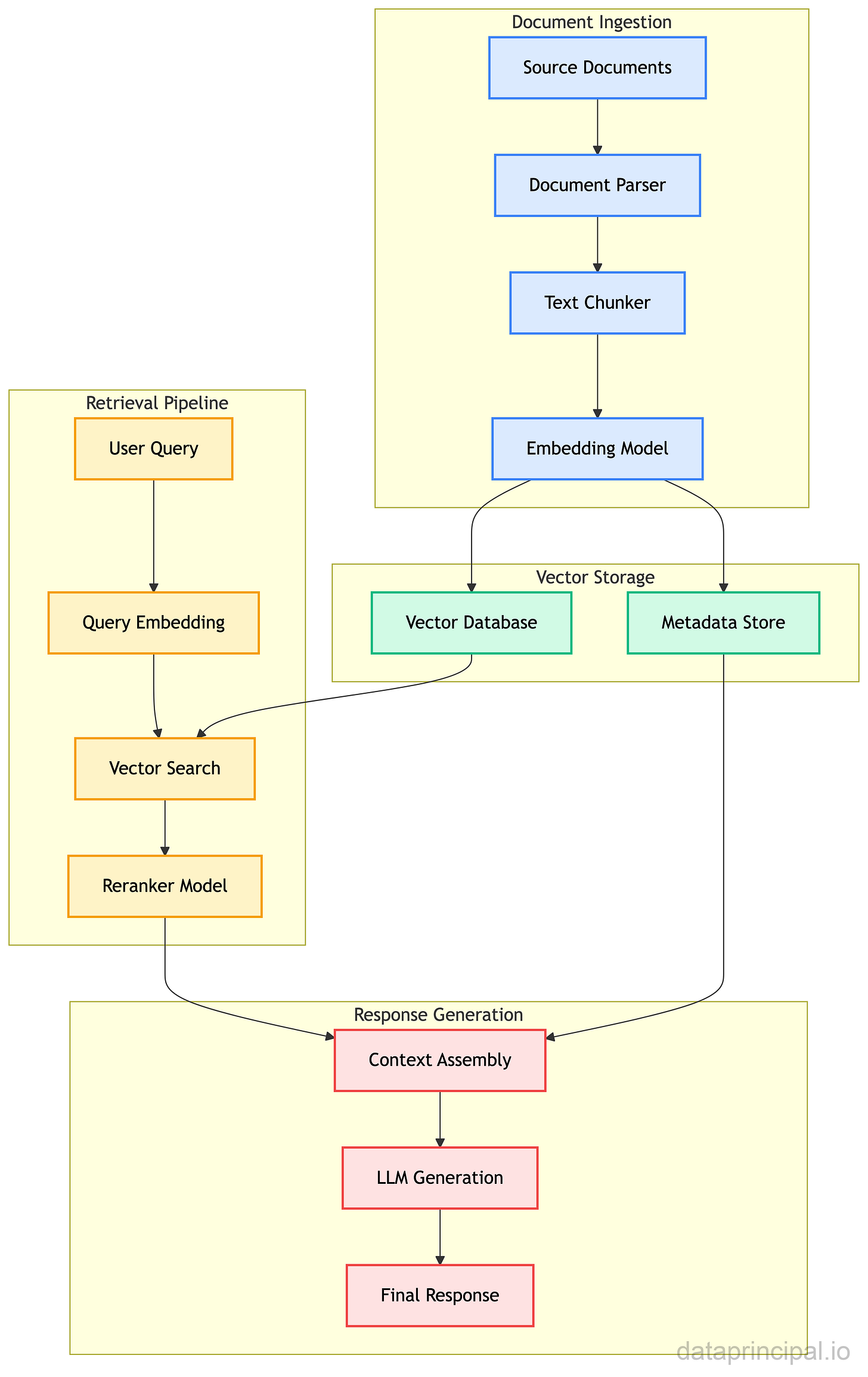

Shift 3: RAG Fundamentals & Evolution. RAG systems ground your AI models in proprietary data, making them accurate and reliable for enterprise use. The architecture is evolving from simple retrieval to multi-step reasoning and autonomous decision-making. (Architecture details: Appendix A.3)

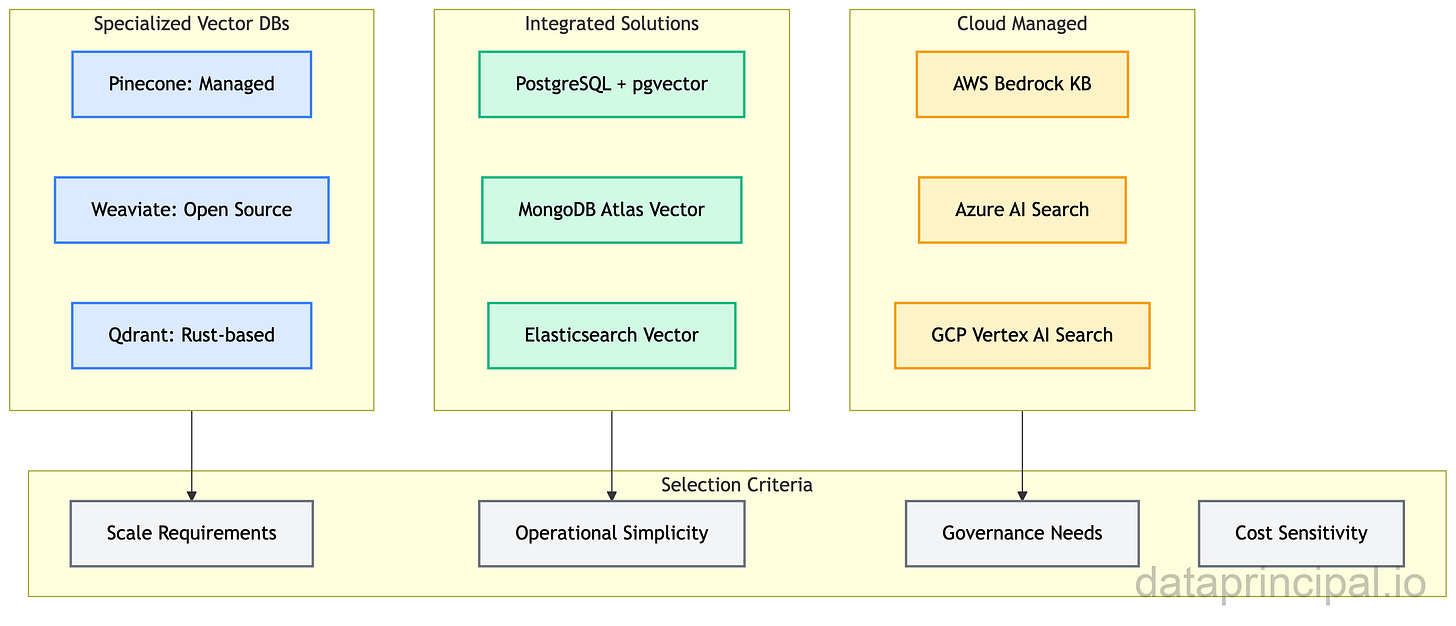

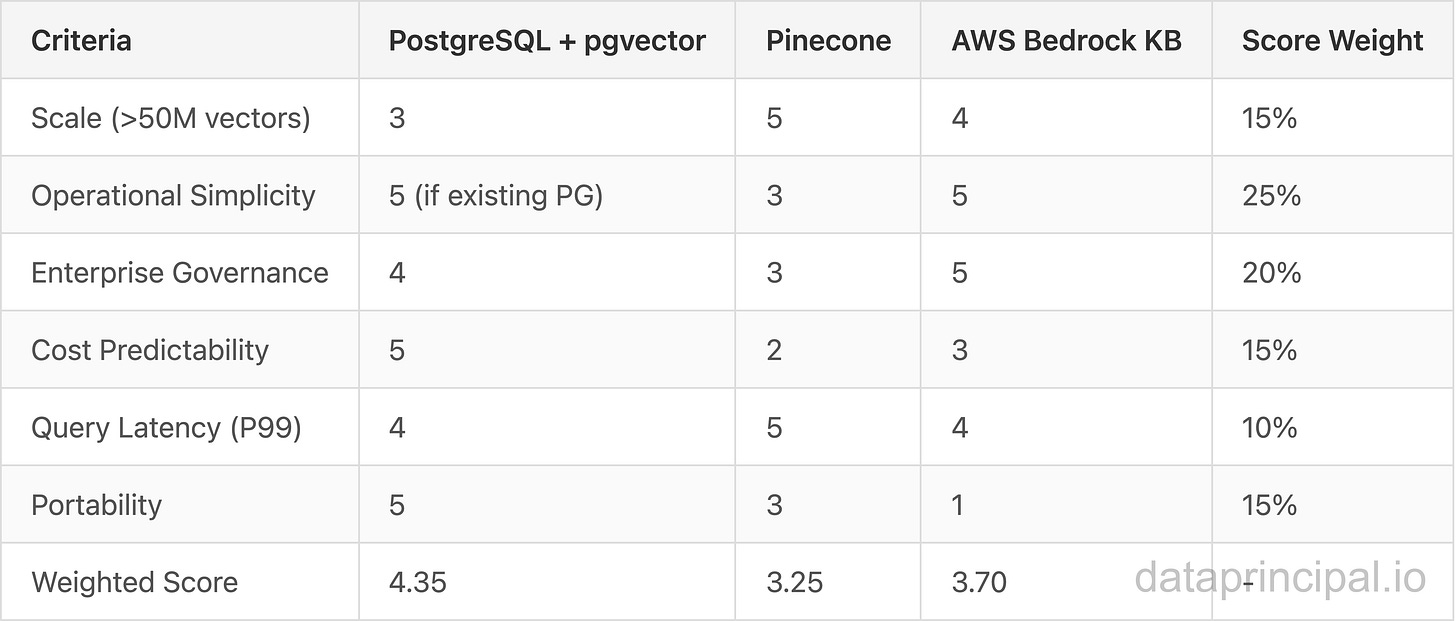

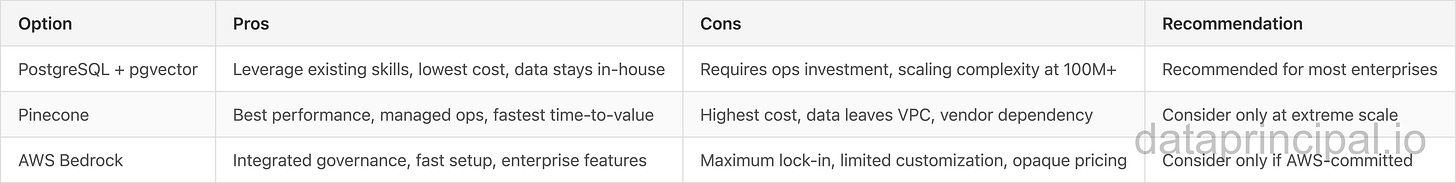

Shift 4: Vector Database Market Dynamics. The market is splitting into three segments: specialized databases (Pinecone, Weaviate) for extreme scale, integrated solutions (PostgreSQL) for operational simplicity, and cloud-managed services (AWS, Azure) for enterprise governance. Each serves different use cases with different trade-offs.

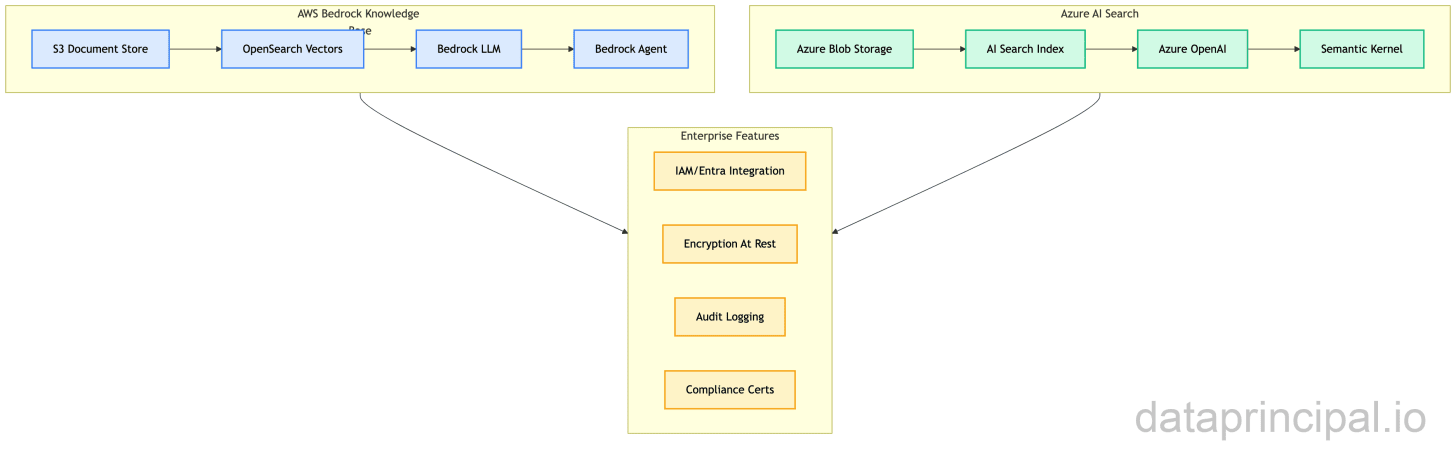

Shift 5: Cloud Provider Managed RAG. AWS Bedrock Knowledge Base and Azure AI Search offer turnkey RAG with enterprise governance, but at the cost of cloud lock-in. For organizations committed to a single cloud, these services reduce time-to-production significantly.

Shift 6: Enterprise Adoption Trajectory. With 42% of enterprises already deploying AI as of 2023 (per IBM’s Global AI Adoption Index), adoption continues to accelerate, driven by customer service automation, internal knowledge management, and document processing. The use cases are narrower than the hype suggests, but the ROI is increasingly proven.

Component Summary

Scenario: Global Financial Services Firm

Consider a Fortune 100 financial services firm facing a common challenge: their 40,000 customer service agents spend 35% of their time searching internal knowledge bases for answers to customer queries. The existing search is keyword-based, slow, and often returns irrelevant results.

Such a firm might evaluate three approaches:

Pinecone + LangChain: Best retrieval quality, highest cost ($400K/year projected)

AWS Bedrock Knowledge Base: Moderate quality, fastest deployment, AWS lock-in

PostgreSQL + pgvector: Good quality, lowest cost, leverages existing infrastructure

In this scenario, choosing PostgreSQL with pgvector makes sense because:

Already running Aurora PostgreSQL: Zero new infrastructure, existing DBA expertise

Compliance requirements: Data never leaves their VPC, unlike external vector services

Cost structure: $40K/year versus $400K for Pinecone at their scale (50M documents)

Potential results in this scenario:

67% reduction in average search time (18 seconds to 6 seconds)

23% improvement in first-call resolution

$12M annual savings from reduced call handling time

Zero additional infrastructure or vendor contracts
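The arithmetic behind these scenario figures is easy to verify. The sketch below uses only the numbers quoted above for the hypothetical firm; the function names are illustrative, not part of any tool.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100.0


def cost_ratio(expensive: float, cheap: float) -> float:
    """How many times more the expensive option costs."""
    return expensive / cheap


# Scenario figures quoted in the text (hypothetical firm, not measured data).
search_time_cut = percent_reduction(before=18.0, after=6.0)
pinecone_vs_pgvector = cost_ratio(expensive=400_000, cheap=40_000)
annual_difference = 400_000 - 40_000

print(f"Search time reduction: {search_time_cut:.0f}%")
print(f"Cost ratio: {pinecone_vs_pgvector:.0f}x")
print(f"Annual cost difference: ${annual_difference:,}")
```

At these figures the infrastructure cost gap ($360K per year) is small next to the projected $12M in handling-time savings, which is why the scenario treats cost predictability and operational fit as the deciding factors.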

The key insight: the firm trades theoretical best-in-class performance for operational simplicity. Benchmark differences between pgvector (with pgvectorscale) and Pinecone often don’t matter in practice, because both are fast enough. What matters is operational maturity and cost predictability.

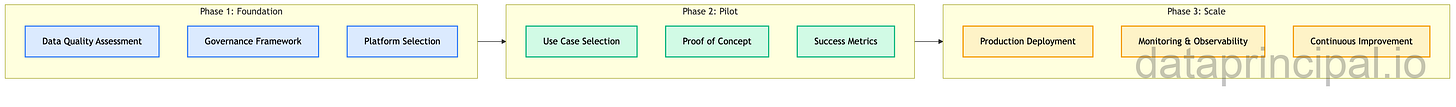

Where to Start: Your Implementation Roadmap

If you’re already running PostgreSQL:

Evaluate extending your current infrastructure with AI capabilities. The business case: leverage existing investments, avoid new vendors, reduce operational complexity. Time to value: weeks, not months.

If you’re committed to a single cloud provider:

Evaluate managed services (AWS Bedrock Knowledge Base, Azure AI Search, GCP Vertex AI Search). The vendor lock-in is real, but so is the operational simplification. Make this choice consciously.

If you have extreme scale requirements:

Evaluate specialized databases designed for AI workloads. The trade-off: best-in-class performance vs. operational overhead and vendor dependencies. Decision framework: Appendix B.

Regardless of where you are:

Build your data governance foundation first. RAG systems inherit the quality of the data they retrieve. If your source documents are outdated, inconsistent, or poorly structured, your AI will be too. Invest in data quality before investing in AI infrastructure.

The data infrastructure decisions you make in 2026 will determine your AI capabilities for the next decade. The model race is commoditizing. The data race is just beginning.

Email me: can [at] dataprincipal.io or connect on LinkedIn.

Dictionary

ADR: Architecture Decision Record. Document capturing significant architectural choices

BM25: Best Matching 25. Probabilistic ranking algorithm for keyword-based text search

CDC: Change Data Capture. Pattern for tracking and propagating database changes in real-time

Chunking: Splitting documents into smaller segments for embedding and retrieval

Context Window: Maximum tokens an LLM can process in a single request

Embeddings: Numerical representations of text/data as vectors, capturing semantic meaning

Hallucination: When an LLM generates plausible but factually incorrect information

HNSW: Hierarchical Navigable Small World. Graph-based index for approximate nearest neighbor search

Hybrid Search: Combining vector similarity search with keyword (BM25) search for better recall

IVFFlat: Inverted File Flat. Clustering-based index for vector similarity search

LLM: Large Language Model. AI models like GPT-4, Claude, Gemini trained on massive text datasets

MRR: Mean Reciprocal Rank. Metric measuring how high relevant results appear in rankings

NDCG: Normalized Discounted Cumulative Gain. Metric for ranking quality evaluation

P99 Latency: 99th percentile response time; 99% of requests complete at least this fast. Approximates near-worst-case user experience

pgvector: PostgreSQL extension enabling vector similarity search within standard PostgreSQL

PII: Personally Identifiable Information. Data that can identify an individual

RAG: Retrieval-Augmented Generation. Architecture pattern that grounds LLM responses in retrieved documents

Reranking: Second-stage retrieval that re-scores initial results using more sophisticated models

Vector Database: Database optimized for storing and querying high-dimensional vectors (embeddings)

VPC: Virtual Private Cloud. Isolated network environment within cloud infrastructure

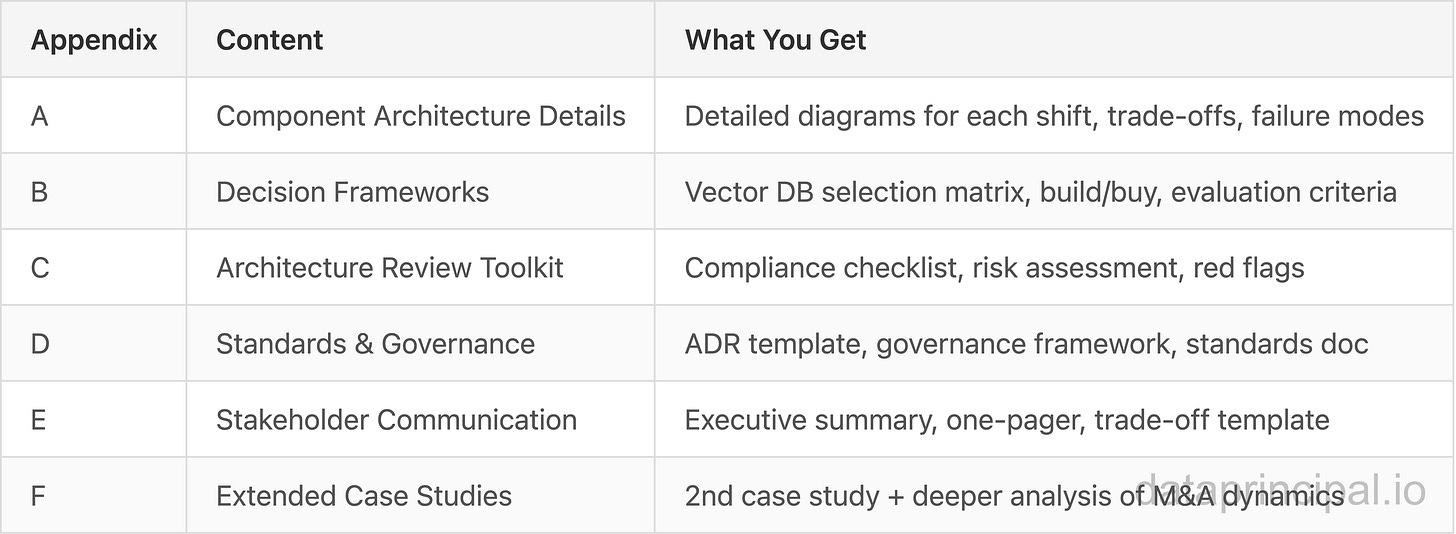

What’s in the Appendix

For principals who want to build, validate, or get a second opinion:


Appendix A: Component Architecture Details

A.1 M&A Consolidation Impact

Trade-offs:

Consolidation vs. Best-of-Breed: Integrated stacks reduce operational complexity but may sacrifice specialized performance

Vendor Lock-in vs. Speed: Acquired capabilities become deeply integrated, making future migrations costly

Investment Protection: Organizations invested in acquired companies (Confluent users, Informatica customers) face uncertain roadmaps

Failure Modes:

Integration stalls: Acquisitions take 18-36 months to fully integrate; expect feature gaps

Pricing changes: Acquirers often rationalize pricing upward post-acquisition

Talent exodus: Key engineers from acquired companies often leave within 24 months

Strategic Implications:

Evaluate vendor acquisition risk in all new data infrastructure purchases

Build abstraction layers where possible to reduce switching costs

Monitor integration roadmaps closely for acquired products you depend on

A.2 PostgreSQL + pgvector Architecture

Trade-offs:

HNSW vs. IVFFlat Index:

HNSW: Faster queries, more memory, slower builds

IVFFlat: Lower memory, faster builds, requires tuning

Embedded vs. Separate: Storing vectors in the same table as documents simplifies joins but increases row size

Dimension trade-off: Higher dimensions (1536 vs. 768) improve accuracy but increase storage and query time
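To make the dimension trade-off concrete, here is a rough storage estimate. It assumes pgvector's documented size for a single-precision vector (4 bytes per dimension plus 8 bytes of overhead) and ignores row overhead, index size, and TOAST behavior, so treat the result as a lower bound. The function names and the 50M vector count are illustrative.

```python
def raw_vector_bytes(dimensions: int) -> int:
    """Bytes for one single-precision vector: 4 per dimension plus 8 of overhead."""
    return 4 * dimensions + 8


def estimate_storage_gib(num_vectors: int, dimensions: int) -> float:
    """Raw vector payload in GiB, excluding indexes and row overhead."""
    return num_vectors * raw_vector_bytes(dimensions) / 2**30


# Compare the two embedding sizes mentioned above at an assumed 50M vectors.
for dims in (768, 1536):
    gib = estimate_storage_gib(50_000_000, dims)
    print(f"{dims} dims, 50M vectors: ~{gib:.0f} GiB of raw vector data")
```

Doubling the dimension count roughly doubles the raw payload, and the index grows with it, which feeds directly into the memory concerns listed under failure modes.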

Failure Modes:

Index not used: PostgreSQL may choose a sequential scan if statistics are stale (run ANALYZE)

Memory exhaustion: HNSW indexes need to fit in memory to perform well; large indexes can exhaust RAM (OOM) or fall back to slow disk reads

Dimension mismatch: Embedding model change requires re-embedding and re-indexing all vectors

Scaling Considerations:

Horizontal: Use pg_partman for table partitioning; Citus for distributed PostgreSQL

Vertical: pgvector is CPU-bound; more cores help more than RAM beyond index size

Practical limit: 50-100M vectors per instance before considering sharding

A.3 RAG Architecture Components

Trade-offs:

Chunk Size: Smaller chunks (256 tokens) = better precision, more retrieval calls; Larger chunks (1024 tokens) = more context per retrieval, potential noise

Hybrid Search: Combining vector + keyword improves recall but adds latency (~20ms)

Reranking: Cross-encoder reranking improves precision significantly but adds 50-200ms latency
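One common way to combine vector and keyword results is reciprocal rank fusion (RRF). The article does not prescribe a fusion method, so this is a sketch of one option rather than the recommended one. The document IDs are made up, and `k = 60` is the conventional default for RRF, not a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists of document IDs; each input list is ordered best-first."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank) for every document it returns.
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical vector search results
keyword_hits = ["doc_c", "doc_a", "doc_d"]  # hypothetical BM25 results
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

RRF works on ranks only, so it sidesteps the problem of comparing BM25 scores with cosine similarities, which live on different scales.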

Failure Modes:

Context window overflow: Retrieved chunks exceed LLM context limit; implement token counting

Embedding drift: Model updates change vector space; requires re-embedding corpus

Staleness: Documents updated but embeddings not refreshed; implement CDC-triggered re-embedding
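The token-counting mitigation for context window overflow can be sketched as a budget check before prompt assembly. The word-based counter below is a crude stand-in for the model's real tokenizer, and the function names and sample chunks are hypothetical.

```python
def approx_token_count(text: str) -> int:
    """Crude stand-in for a real tokenizer: counts whitespace-separated words."""
    return len(text.split())


def pack_chunks(ranked_chunks: list[str], token_budget: int) -> list[str]:
    """Keep chunks in rank order until the next one would exceed the budget."""
    selected: list[str] = []
    used = 0
    for chunk in ranked_chunks:
        cost = approx_token_count(chunk)
        if used + cost > token_budget:
            break  # stop at the first chunk that does not fit, preserving rank order
        selected.append(chunk)
        used += cost
    return selected


chunks = [
    "refund policy applies within thirty days",
    "wire transfers settle next business day",
    "branch hours vary by region",
]
kept = pack_chunks(chunks, token_budget=12)
print(kept)
```

In a real pipeline the budget would be the model's context limit minus the space reserved for the system prompt, the user question, and the generated answer.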

Agentic RAG Extension:

Multi-step reasoning: Agent decides whether to retrieve more context

Tool use: Agent can query structured data, APIs, or perform calculations

Query decomposition: Complex queries split into sub-queries for better retrieval

A.4 Vector Database Selection

Trade-offs:

Specialized DBs: Best performance at extreme scale, highest operational overhead, vendor-specific expertise required

Integrated Solutions: Leverages existing skills, lower performance ceiling, simpler operations

Cloud Managed: Fastest time-to-production, highest lock-in, enterprise governance built-in

Failure Modes:

Pinecone outages: Managed service outages impact all dependent applications; no fallback

pgvector scaling wall: Beyond 100M vectors, query latency degrades without a sharding strategy

Cloud egress costs: Moving data out of cloud-managed services can be prohibitively expensive

A.5 Cloud Provider Managed RAG

Trade-offs:

Time-to-Production: Weeks instead of months; pre-integrated with enterprise auth

Portability: Near-zero; migrating to a different cloud requires a complete rebuild

Cost Transparency: Opaque pricing with multiple metered dimensions (storage, queries, LLM calls)

Failure Modes:

Service quota limits: Rate limits can block production traffic during spikes

Model deprecation: Cloud providers can deprecate LLM versions with limited notice

Region availability: Not all features are available in all regions, which can complicate data residency

A.6 Enterprise Adoption Strategy

Trade-offs:

Speed vs. Risk: Faster deployment increases failure probability; governance adds latency

Scope vs. Complexity: Broader initial scope increases implementation risk

Internal vs. External Data: Internal data carries lower regulatory risk but offers limited training signal

Failure Modes:

Data quality debt: RAG amplifies bad data; garbage in, hallucinations out

Success metric gaming: Teams optimize for retrieval metrics rather than business outcomes

Shadow AI proliferation: Without a shared platform, teams build one-off solutions

Appendix B: Decision Frameworks

B.1 Vector Database Selection Matrix

Score Key: 1=Poor, 3=Adequate, 5=Excellent

Recommendation by Profile:

Existing PostgreSQL, <50M vectors, cost-sensitive: PostgreSQL + pgvector

Scale-first, budget available, need managed: Pinecone

AWS-committed, enterprise governance critical: AWS Bedrock Knowledge Base
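The three profiles above can be expressed as a small rule function. This is a sketch only: the field names are invented for illustration, and the order in which rules are checked is an assumption the article does not specify.

```python
from dataclasses import dataclass


@dataclass
class Profile:
    """Hypothetical description of an organization's situation."""
    runs_postgresql: bool = False
    expected_vectors: int = 0
    cost_sensitive: bool = False
    scale_first: bool = False
    budget_available: bool = False
    needs_managed: bool = False
    aws_committed: bool = False
    governance_critical: bool = False


def recommend(p: Profile) -> str:
    """Return the first matching recommendation; rule order is an assumption."""
    if p.aws_committed and p.governance_critical:
        return "AWS Bedrock Knowledge Base"
    if p.runs_postgresql and p.expected_vectors < 50_000_000 and p.cost_sensitive:
        return "PostgreSQL + pgvector"
    if p.scale_first and p.budget_available and p.needs_managed:
        return "Pinecone"
    return "No direct match: score the options with the selection matrix"


example = Profile(runs_postgresql=True, expected_vectors=25_000_000, cost_sensitive=True)
print(recommend(example))
```

Most organizations will not match a profile cleanly, which is why the function has an explicit fallthrough instead of defaulting to any one product.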

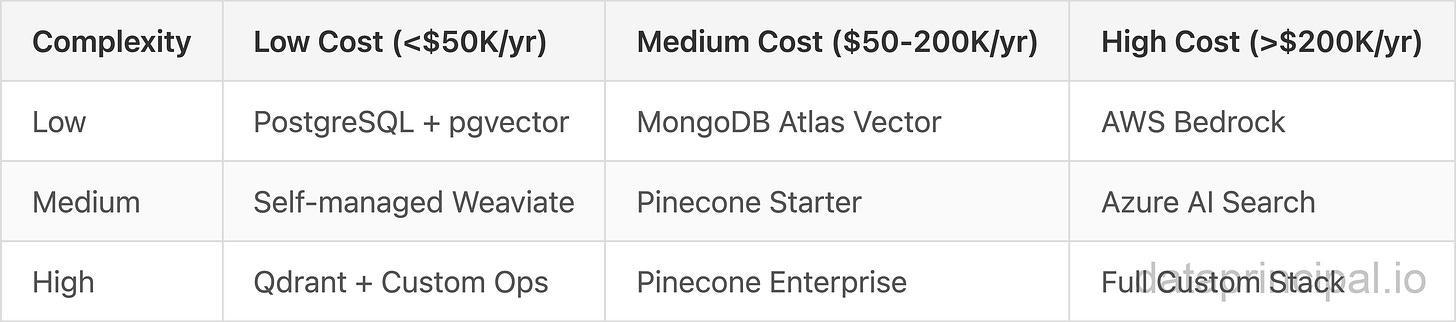

B.2 Build vs Buy Framework

Build (Self-Managed RAG) When:

Existing PostgreSQL infrastructure and expertise

Data residency requirements prevent external services

Cost sensitivity at scale (>$100K/year on managed services)

Need for deep customization (custom rerankers, domain-specific chunking)

Multi-cloud strategy requiring portability

Buy (Managed RAG) When:

Time-to-market is critical (<3 months)

Small team without infrastructure expertise

Enterprise governance requirements (SOC2, HIPAA) need out-of-box compliance

Single-cloud commitment already made

Predictable workload with stable query patterns

Hybrid Approach:

Build core RAG pipeline on PostgreSQL

Use managed LLM APIs (OpenAI, Anthropic) for generation

Implement observability with LLM-focused tooling (LangSmith, Arize)

B.3 Cost-Complexity Trade-off Grid

Appendix C: Architecture Review Toolkit

C.1 Architecture Compliance Checklist

Before Implementation:

[ ] Data governance framework documented and approved

[ ] Source document quality assessment completed

[ ] Vector storage solution selected with rationale documented

[ ] Embedding model selected with versioning strategy

[ ] Retrieval quality benchmarks defined (MRR, NDCG targets)

[ ] Latency SLAs defined (P50, P95, P99)

[ ] Cost model validated with projected query volumes
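For the retrieval quality benchmarks in this checklist, the two named metrics are short enough to compute by hand. A minimal sketch, assuming a non-empty, hand-labeled evaluation set. Note that `ndcg` here normalizes against the best ordering of the retrieved list itself, which is a simplification of the full-corpus definition.

```python
import math


def mean_reciprocal_rank(results: list[list[bool]]) -> float:
    """results[q][i] is True if the i-th result for query q is relevant."""
    total = 0.0
    for flags in results:
        for rank, relevant in enumerate(flags, start=1):
            if relevant:
                total += 1.0 / rank  # only the first relevant hit counts
                break
    return total / len(results)


def dcg(gains: list[float]) -> float:
    """Discounted cumulative gain: gain at each rank divided by log2(rank + 1)."""
    return sum(g / math.log2(rank + 1) for rank, g in enumerate(gains, start=1))


def ndcg(gains: list[float]) -> float:
    """DCG divided by the DCG of the same gains sorted best-first."""
    ideal = dcg(sorted(gains, reverse=True))
    return dcg(gains) / ideal if ideal > 0 else 0.0


# Two hypothetical queries: first relevant hit at rank 2, then at rank 1.
mrr = mean_reciprocal_rank([[False, True, False], [True, False, False]])
print(f"MRR: {mrr:.2f}")
print(f"NDCG (ideal order): {ndcg([3.0, 2.0, 1.0]):.2f}")
print(f"NDCG (reversed order): {ndcg([1.0, 2.0, 3.0]):.2f}")
```

Setting targets before implementation matters because both metrics depend on the labeled evaluation set; changing the set later makes before-and-after comparisons meaningless.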

During Implementation:

[ ] Chunking strategy tested with representative documents

[ ] Hybrid search (vector + keyword) evaluated

[ ] Reranking model tested for latency/accuracy trade-off

[ ] Context window limits handled gracefully

[ ] Fallback behavior defined when retrieval fails

[ ] Logging and observability instrumented

Before Production:

[ ] Load testing completed at 2x expected peak

[ ] Failure mode testing completed (DB down, LLM rate limited)

[ ] Data refresh pipeline tested end-to-end

[ ] Rollback procedure documented and tested

[ ] Monitoring dashboards operational

[ ] On-call runbook created
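Load test results can be checked against the latency SLAs (P50, P95, P99) with a few lines. The sketch below uses the nearest-rank percentile definition, which is one of several in common use. The sample latencies are synthetic, and the 200 ms P99 target is borrowed from the ADR example in Appendix D.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_sla(samples_ms: list[float], targets_ms: dict[int, float]) -> dict[int, bool]:
    """Map each percentile target to whether the observed value is within it."""
    return {p: percentile(samples_ms, p) <= limit for p, limit in targets_ms.items()}


# Synthetic latencies of 1..100 ms standing in for real load test output.
samples = [float(i) for i in range(1, 101)]
print(percentile(samples, 50), percentile(samples, 95), percentile(samples, 99))
print(meets_sla(samples, {99: 200.0}))
```

Averages hide tail behavior, so the comparison is made per percentile rather than on mean latency.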

C.2 Questions to Ask Your Team

“What happens when the vector database is unavailable? Do we fail open or closed?”

“How do we know if retrieval quality is degrading? What metrics are we monitoring?”

“How long does it take for a document update to be searchable? What’s our staleness tolerance?”

“What’s our embedding model versioning strategy? Can we A/B test new models?”

“How do we handle documents that exceed our chunk size? Multi-modal content?”

“What’s our data lineage from source document to generated response?”

“How do we detect and handle hallucinations in production?”

“What’s our cost per query? How does it scale with document corpus growth?”

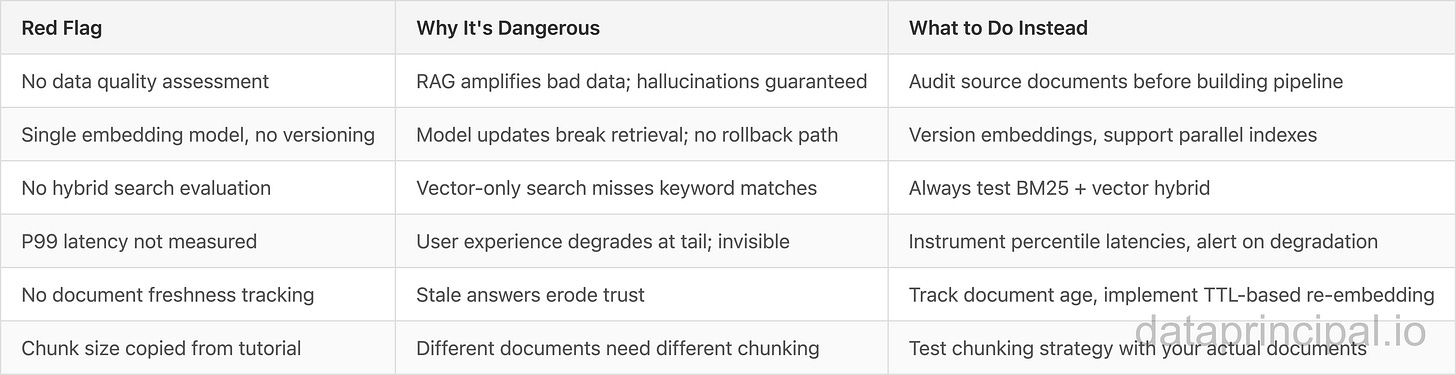

C.3 Red Flags and Anti-Patterns

Appendix D: Standards & Governance Templates

D.1 ADR Template: Vector Database Selection

Status: Proposed

Context:

Our organization needs to implement RAG capabilities for customer service automation. We currently run Aurora PostgreSQL for our primary OLTP workloads. We expect to index 25 million documents initially, growing to 100 million over 3 years. Latency requirements are P99 < 200ms for retrieval.

Decision:

[Your team fills in]

Options considered:

PostgreSQL + pgvector (extend existing infrastructure)

Pinecone (managed vector database)

AWS Bedrock Knowledge Base (managed RAG)

Consequences:

PostgreSQL + pgvector:

Pro: Leverages existing infrastructure and expertise

Pro: No additional vendor contracts

Pro: Data stays in our VPC

Con: Requires internal capacity for operations

Con: May need sharding strategy at 100M scale

Pinecone:

Pro: Best-in-class query performance

Pro: Fully managed operations

Con: Data leaves our control

Con: High cost at scale (~$400K/year at 100M vectors)

AWS Bedrock:

Pro: Integrated with existing AWS footprint

Pro: Enterprise governance built-in

Con: Maximum vendor lock-in

Con: Less flexibility for custom retrieval logic

D.2 Technical Standards Template

Standard Name: RAG-Architecture-Standard

Version: 1.0

Owner: [Principal Engineer / Architect Name]

Effective Date: [Date]

Review Date: [Date + 12 months]

Principles:

All RAG implementations must use approved embedding models from the model registry

Vector storage must support metadata filtering for governance enforcement

Document freshness SLAs must be defined and monitored for each RAG application

Retrieval quality metrics (MRR@10, NDCG) must be tracked and baselined

Required Patterns:

Hybrid search (vector + BM25) for all production implementations

Reranking for high-precision use cases (legal, compliance, financial)

Fallback to keyword search when vector retrieval returns low-confidence results

Document-level access control inherited from source systems
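The keyword fallback pattern can be sketched as a thin wrapper around two search callables. Everything here is illustrative: the search functions are stubs, the document IDs are made up, and the 0.75 confidence threshold is an arbitrary placeholder that would need calibration against real relevance judgments.

```python
from typing import Callable

Hit = tuple[str, float]  # (document ID, relevance score)


def retrieve_with_fallback(
    query: str,
    vector_search: Callable[[str], list[Hit]],
    keyword_search: Callable[[str], list[Hit]],
    min_score: float = 0.75,
) -> tuple[str, list[Hit]]:
    """Use vector results when the best score clears the threshold, else keyword results."""
    hits = vector_search(query)
    if hits and max(score for _, score in hits) >= min_score:
        return "vector", hits
    return "keyword", keyword_search(query)


def weak_vector_search(query: str) -> list[Hit]:
    return [("kb-101", 0.42)]  # stub: a low-confidence vector result


def keyword_search(query: str) -> list[Hit]:
    return [("kb-207", 1.0)]  # stub: a keyword match


source, hits = retrieve_with_fallback("wire transfer limits", weak_vector_search, keyword_search)
print(source, hits)
```

Returning the source label alongside the hits makes it possible to log how often the fallback fires, which is itself a useful signal of retrieval quality.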

Prohibited Patterns:

Direct LLM API calls without retrieval for factual questions

Single-model embedding without versioning capability

Production RAG without query logging and observability

Chunking strategies that break mid-sentence or mid-paragraph
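Avoiding mid-sentence breaks mostly means chunking on sentence boundaries rather than raw token offsets. A minimal sketch: it counts words as a stand-in for tokens, and the regular expression is a naive sentence splitter that will mishandle abbreviations such as "Dr." in real documents.

```python
import re


def chunk_by_sentence(text: str, max_words: int) -> list[str]:
    """Group whole sentences into chunks of at most max_words words."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        words = len(sentence.split())
        if current and count + words > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)  # an oversized sentence becomes its own chunk
        count += words
    if current:
        chunks.append(" ".join(current))
    return chunks


text = (
    "Refunds are issued within thirty days. "
    "Wire transfers settle the next business day. "
    "Branch hours vary."
)
print(chunk_by_sentence(text, max_words=10))
```

Sentences are never split, so a single sentence longer than the limit yields an oversized chunk; whether to allow that or to split further is a policy decision for the standard's owner.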

Appendix E: Stakeholder Communication

E.1 Executive Summary Template

For: C-Suite / VP Engineering

Topic: Enterprise AI Data Infrastructure Strategy 2026

Recommendation: Invest in PostgreSQL-based vector infrastructure to support AI initiatives

Key Points:

Business Impact: AI/ML capabilities are becoming table stakes; 42% of enterprises already have production AI deployed (per IBM’s 2023 index), with adoption accelerating

Strategic Timing: $19+ billion in M&A signals data infrastructure consolidation; decisions made now lock in for 5+ years

Investment Required: $500K-2M for foundation (data quality, vector infrastructure, governance)

ROI Trajectory: Customer service automation alone can deliver 3-5x ROI within 18 months

Risk of Inaction: Competitors with mature data infrastructure will deploy AI applications faster, with better quality, and at lower cost. The gap compounds over time.

E.2 Architecture One-Pager

Problem: Current data infrastructure wasn’t designed for AI workloads. Vector search, real-time retrieval, and LLM integration require new architectural patterns.

Solution: Extend PostgreSQL with pgvector for vector storage, implement RAG architecture for knowledge retrieval, establish data governance foundation.

Proof: A hypothetical Fortune 100 financial services scenario illustrates the potential: a 67% reduction in average knowledge-base search time and a 23% improvement in first-call resolution with PostgreSQL-based RAG, with projected $12M annual savings.

Next Steps:

Q1: Data quality audit of customer-facing knowledge base

Q2: PostgreSQL pgvector POC with customer service use case

Q3: Production deployment with monitoring and feedback loop

Q4: Expand to additional use cases (internal knowledge, document processing)

Investment: $800K Year 1 (infrastructure + team capacity)

E.3 Trade-off Communication Template

Appendix F: Extended Case Studies

F.1 Hypothetical Scenario: Healthcare Knowledge Management

Consider a regional U.S. healthcare system with 12 hospitals and 3,000 physicians that needs to modernize its clinical knowledge base. Physicians might be spending 2+ hours daily searching for treatment protocols, drug interactions, and clinical guidelines across 47 different systems.

Challenge: In this scenario, the organization’s HIPAA compliance policy requires document storage and retrieval to remain on-premises, which rules out cloud-managed RAG services for the retrieval layer.

Potential Approach:

Deploy PostgreSQL 18 with pgvector on dedicated HIPAA-compliant infrastructure

Implement RAG pipeline with custom medical domain chunking (preserving clinical context)

Integrate with Epic EHR for seamless clinical workflow embedding

Use Claude 3 Haiku via Amazon Bedrock (HIPAA BAA in place) for generation

Key Architecture Decisions:

On-premises vector storage: PostgreSQL pgvector on dedicated hardware (8 vCPU, 64GB RAM)

Custom chunking: Clinical documents chunked by section (History, Assessment, Plan) not by token count

Hybrid search: BM25 for medical terminology + vector for semantic similarity

Audit trail: Every query logged with physician ID, retrieved documents, and generated response
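Section-based chunking can be sketched as follows. The code assumes each section heading sits on its own line followed by a colon, which real clinical documents will not always honor. The sample note is synthetic and the function name is illustrative.

```python
SECTION_HEADINGS = ("History", "Assessment", "Plan")  # headings named in the scenario


def chunk_by_section(note: str, headings: tuple[str, ...] = SECTION_HEADINGS) -> dict[str, str]:
    """Return one chunk per section; text before the first heading is dropped."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in note.splitlines():
        stripped = line.strip()
        if stripped.endswith(":") and stripped[:-1] in headings:
            current = stripped[:-1]
            sections[current] = []
        elif current is not None and stripped:
            sections[current].append(stripped)
    return {name: " ".join(lines) for name, lines in sections.items()}


# Synthetic note for illustration only.
note = "\n".join([
    "History:",
    "Patient reports intermittent chest pain.",
    "Assessment:",
    "Likely stable angina.",
    "Plan:",
    "Order stress test.",
    "Follow up in two weeks.",
])
print(chunk_by_section(note))
```

Keeping the section name as the key lets it travel with the chunk as metadata, so retrieval can filter or weight by section and the audit log can record which part of a document informed an answer.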

Expected outcomes in this scenario:

71% reduction in clinical search time (average 4.2 minutes to 1.2 minutes per query)

34% improvement in protocol adherence (measured via clinical audits)

Zero PHI exposure incidents (validated by quarterly security audit)

$8.4M annual savings from reduced documentation time

Key Lessons from This Pattern:

Medical domain requires specialized chunking; generic strategies fail

Hybrid search is non-negotiable for medical terminology

Audit trail requirements drive significant architecture decisions

Physician adoption requires extensive UX iteration (expect 10-15 design cycles)

F.2 Deeper Analysis: M&A Strategic Dynamics

Why IBM Bought Confluent ($11B, December 2025):

IBM’s AI strategy lacked real-time data capabilities. Their Watson AI could process batch data but couldn’t react to streaming events. Confluent’s Kafka platform provides:

Real-time feature computation for ML models

Event-driven AI agent triggers

Stream processing for continuous learning

Integration backbone for hybrid cloud

Strategic implication: IBM is building an integrated “AI factory” that ingests, processes, and acts on data in real-time.

Why Salesforce Bought Informatica ($8B, May 2025):

Salesforce’s Customer 360 vision requires unified customer data. Informatica provides:

Data quality for reliable AI training

MDM (Master Data Management) for customer identity resolution

Data governance for regulatory compliance

Integration with 200+ enterprise data sources

Strategic implication: Salesforce is positioning Data Cloud as the “data operating system” for enterprise AI.

Why Snowflake Bought Crunchy Data ($250M, June 2025):

Smaller deal, bigger signal. Snowflake recognized that:

PostgreSQL is becoming the standard for AI applications (pgvector, Supabase)

OLTP + OLAP convergence is accelerating

Enterprise customers want one data platform, not two

Strategic implication: Snowflake is expanding from analytics-only to transactional + analytics, directly competing with PostgreSQL-native vendors.

F.3 Pattern Summary

Common Patterns:

PostgreSQL emerges as strategic choice for data-sensitive enterprises

Custom domain chunking outperforms generic approaches

Hybrid search (vector + keyword) is table stakes, not optional

Data governance foundation precedes AI application success