AI Development Tools and the Architect's Role: Lovable's $330M Funding Changes Everything

Lovable raised $330M at a $6.6B valuation, with 100,000 projects created daily. Here’s how architects must evolve when anyone can ship by lunch, or risk becoming the bottleneck in AI-speed development.

$330M in funding. $6.6B valuation. 100,000 projects created daily. Your architecture role just changed. Not because AI will replace you. Because the gatekeeping model you built your career on is collapsing. The question is whether you’re ready to evolve before the market forces you to.

How to Read This Article

This article serves two audiences with overlapping but distinct needs:

If you’re an architect (engineer, tech lead, senior IC):

Read straight through for complete technical depth. You’ll get:

Full architectural analysis with trade-offs

Implementation details and edge cases

Production patterns from 20+ years of experience

Code patterns, diagrams, and decision frameworks

If you’re an executive (Director, VP, CTO, C-level):

You have two options:

Strategic path: Read the Problem section, then skip to Executive Decision Framework for decision tools, ROI analysis, and team assessment questions

Full context: Read the complete article to understand what your architects are evaluating

Why this dual approach?

After 20+ years building platforms and leading teams, I’ve learned: architects need to know what executives will ask, and executives need to understand what architects are weighing. This article bridges that gap.

As an architect, knowing executive concerns gives you a competitive advantage in documentation and presentations. As an executive, understanding architectural trade-offs enables informed decisions beyond five-minute status updates.

Your Role Just Became Unrecognizable

The Funding That Signals a Category Shift

Yesterday, Lovable announced their Series B. $330 million. $6.6 billion valuation. Tripled from $1.8 billion in July. In five months.

The investor list tells you everything: CapitalG (Google’s growth fund), Menlo Ventures, NVentures (NVIDIA), Salesforce Ventures, Databricks Ventures. When this many infrastructure players bet on the same horse, they’re not betting on a company. They’re betting on a category shift.

But let me share the number that should concern every architect reading this:

12 months. $1M ARR to $200M ARR.

That’s not growth. That’s market validation of a new paradigm.

The Problem: Architecture Processes Built for a Different Era

For 20 years, we’ve gatekept software creation. I’ve done it. You’ve done it. We designed processes that assume software creation is slow, expensive, and requires specialized skills.

Think about what it takes to ship a feature in a typical enterprise:

Requirements documentation

Architecture review

Sprint planning

Development (weeks to months)

Code review

QA

Security review

Deployment approval

Each step serves a purpose. Each step also creates a queue. And queues create a backlog.

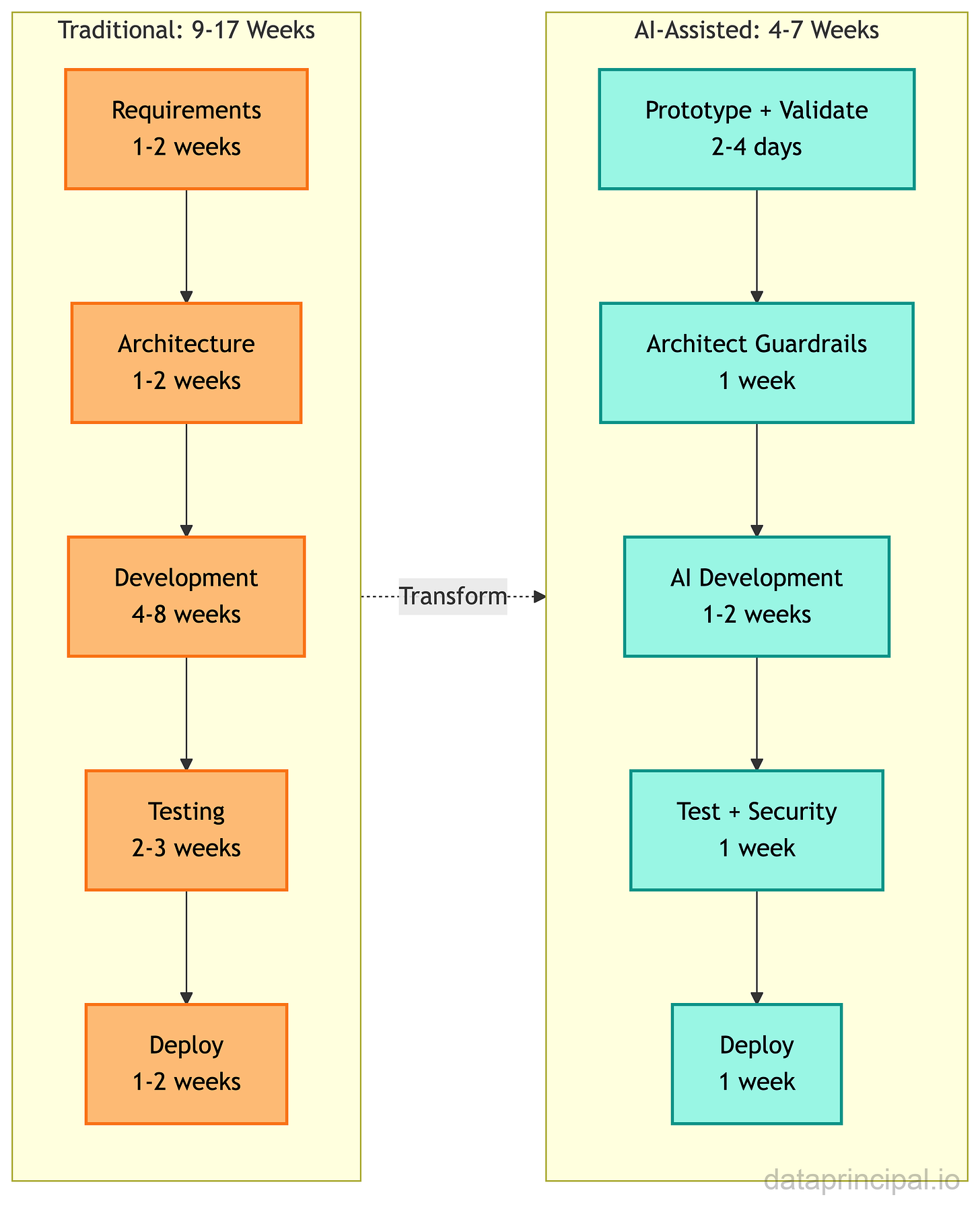

Jorge Luthe, senior director of product at Zendesk, said something that every architect needs to hear: “What once took six weeks, from idea to working prototype, now takes just three hours.”

Six weeks to three hours.

That’s not incremental improvement. That’s the destruction of your review timeline.

Hidden Dimensions Most Architects Haven’t Considered

Here’s what you’re not seeing yet.

Your review process assumed human-speed code production.

When developers produce code at human speed, you have natural checkpoints. Code review catches things. The pace allows for course correction. You can review every significant architectural decision before it’s implemented.

But what happens when anyone can generate 10,000 lines of working code in an afternoon?

Who ensures it fits the system architecture? Who catches the subtle decisions that will haunt you at scale? Who prevents the AI from creating a distributed monolith because nobody specified the integration patterns?

Non-developers just got access to the first 80% of software development.

The 99% who couldn’t code before? They can now build working applications. Product managers can validate ideas without waiting for engineering. Business analysts can create their own dashboards. Marketing can build landing pages. Operations can automate workflows.

This isn’t replacing developers. It’s removing developers from work that wasn’t strategic anyway. But it’s also eliminating architects from decisions they used to control.

Your documentation just became an AI constraint specification.

If AI tools will be generating code in your ecosystem, your architecture documentation isn’t just for humans anymore. It’s the constraint specification for AI generation. Is it clear enough for an AI to follow? Probably not.

The Stakes: Adapt or Become a Bottleneck

Here’s where I need to be direct.

The market for architectural expertise isn’t shrinking. It’s concentrating. The mediocre middle of “I can write code and sort of understand systems” is getting hollowed out. What remains is deep specialization: either you’re building with AI, or you’re architecting systems that AI builds within.

Lovable’s 2.3 million active users and 180,000+ paying subscribers aren’t experimenting with toys. Their customer list includes Klarna, Uber, Zendesk, and Deutsche Telekom. These are enterprises with mature engineering organizations choosing to build this way.

If you don’t adapt your role, you become the bottleneck in a process that’s moving at AI speed. And bottlenecks get routed around.

The question isn’t whether this transition will happen. The $330M just answered that. The question is whether you’ll lead it or be left behind by it.

The Double-Edged Sword

The Risk You See: Falling Behind

The market is moving. $330M in funding. $6.6B valuation. 100,000 projects daily. Your competitors are experimenting. Your leadership is asking questions. The pressure to adopt is real.

But let me show you both edges of this sword.

The Risk You Don’t See: Adopting Without Proper Architecture

Here’s what happened in 2025 when organizations rushed to adopt AI coding tools without architectural guardrails.

CodeRabbit’s December 2025 analysis of thousands of pull requests shows AI-generated code has 1.75x more logic errors, 1.64x more maintainability issues, 1.57x more security vulnerabilities, and 1.42x more performance problems than human-written code. That’s not theoretical. That’s production data. 45% of AI-generated code contains security vulnerabilities (Veracode), and the CEO of Sonar reports that major financial institutions are experiencing recurring outages due to AI-written code.

The technical debt impact is worse. The State of Software Delivery 2025 report found developers now spend more time debugging AI-generated code than benefiting from its speed. Cortex Engineering saw 30% higher change failure rates. Roughly 10,000 startups now need 500K rebuilds. And 42% of companies abandoned most AI initiatives in 2025, double the rate from 2024.

This is the danger architects need to prevent. Not AI tools themselves, but AI tools without architectural oversight.

The Cost of BOTH Paths

After coordinating 3,000+ technology professionals across major transformation programs, I’ve tracked what happens when architecture processes don’t evolve with capability: 30-40% velocity reduction in year one as human-speed processes bottleneck AI-assisted teams, 6-9 months of accumulated technical debt when AI-generated code ships without guardrails, and 15-25% rework costs when you discover at scale that components don’t integrate cleanly.

The platform I architected that enabled €25M Series B funding? We almost lost the deal because our architecture review process couldn’t keep pace with accelerated development. The investor’s due diligence team flagged our velocity gap before we recognized it ourselves.

I’ve seen AI-generated microservices that passed all tests but created network latency patterns that would have killed performance at scale. The code was correct. The architecture was wrong. Discovering this in production costs 10x what catching it in design review costs.

Team Impact and Opportunity Cost

The teams I’ve led through transitions have taught me something uncomfortable: engineers sense when leadership isn’t adapting. Teams that adopt AI tools without adapted practices initially move faster, then hit integration, then hit scale, then spend months untangling decisions that were never reviewed. The velocity curve: 2x speed for 3 months, then 0.5x speed for 6 months fixing what broke.

Your best architects are watching. If they see leadership clinging to outdated processes, they’ll leave for organizations that are adapting. After 20+ years, I can tell you: architectural talent retention correlates directly with perceived organizational adaptability.

While you’re debating, competitors who embraced AI-assisted development are shipping features. Companies using these platforms complete projects 50-75% faster than traditional development. Every month you wait to adapt your architectural practices, competitors gain another month of velocity advantage. That compounds.

Where This Leads Without Action

The organizations that will thrive aren’t the ones with the most developers. They’re the ones who’ve adapted their practices to leverage AI-amplified development while maintaining architectural integrity.

Without adaptation, you become an organization that can’t attract talent because your processes are outdated, can’t ship at competitive velocity because your review cycles assume human-speed development, and can’t maintain quality because AI-generated code ships without proper constraints.

Here’s the reality: I’ve shown you the problem. I’ve shown you the costs. But implementing change in your specific context, with your team’s capabilities, your existing architecture, and your organizational constraints, requires judgment calls I can’t make in an article.

If you’re facing these decisions and want a second opinion from someone who’s navigated multiple technology transitions across 14 platforms and 6 sectors, I work with select organizations on architecture reviews and transition planning.

For now, let me show you exactly how to adapt...

The 80/20 Framework for Architect Evolution

Framework Overview: AI Handles 80%, You Handle the Critical 20%

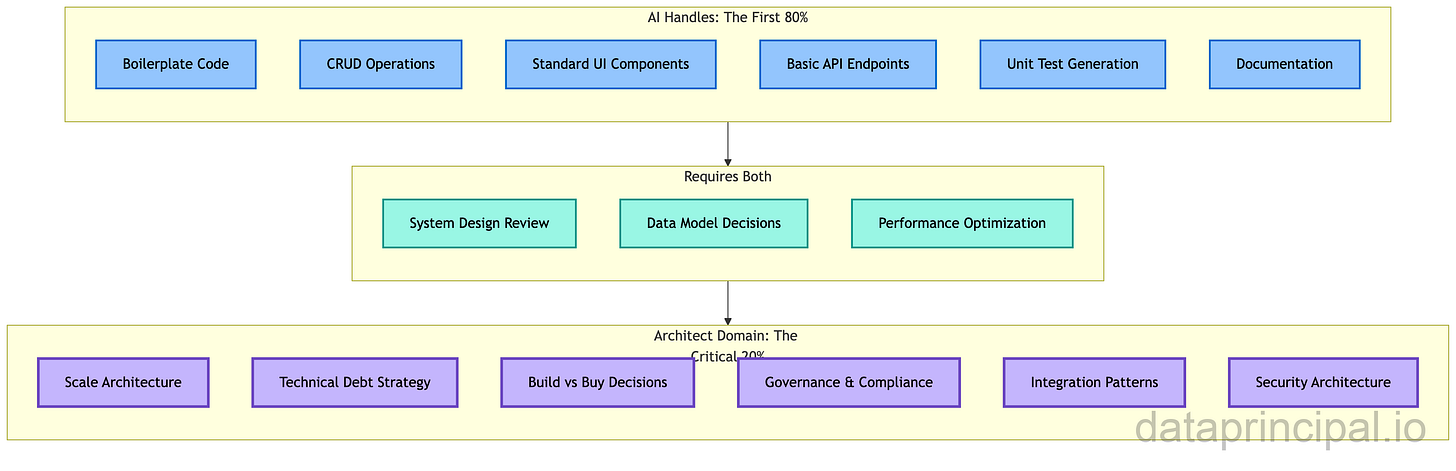

Research on AI and software architecture is clear: AI supports 5 out of 6 core architect activities, but as support, not replacement. In 2025, 90% of software engineers use AI for coding, yet the productivity benefits diminish as projects become more complex.

This tells us something critical: AI is excellent at the first 80% of software development. The straightforward parts. The boilerplate. The standard patterns. The CRUD operations. The basic UI components.

The last 20%? The part that actually matters at scale?

That’s where architectural judgment becomes MORE valuable, not less.

Your role isn’t disappearing. It’s concentrating on the work that actually requires years of judgment and experience.

Component 1: Skills That Become MORE Valuable

Skills that decrease in value:

Writing boilerplate code

Implementing standard patterns from scratch

Building CRUD applications

Creating basic UI components

Writing repetitive unit tests

Skills that increase in value:

System design at scale

Technical debt detection and management

Build vs. buy analysis (now including “generate vs. build vs. buy”)

Integration architecture

Security and compliance frameworks

Performance optimization at the system level

Governance and guardrails design

Skills that transform:

Code review becomes AI output review

Development becomes development orchestration

Architecture documentation becomes AI constraint specification

Technical leadership becomes AI capability development

The judgment calls I’ve made that AI cannot replicate:

“This architecture is technically elegant, but it will cost us 6 months when we need to integrate the acquisition.”

“Yes, microservices would be cleaner, but your team of 8 will drown in operational complexity.”

“The compliance requirement isn’t in the spec, but if we don’t design for it now, the audit will fail.”

These require understanding the business, the team, the regulatory environment, and the technology. They need experience with what breaks at scale. AI can generate code that passes all tests. It cannot tell you whether you should be building this feature at all.

Component 2: Architectural Guardrails for AI Generation

Your architecture documentation needs to evolve. If AI tools will be generating code in your ecosystem, you need explicit, machine-interpretable constraints.

What guardrails should specify:

Integration patterns: How should AI-generated components communicate? REST vs. gRPC vs. events? What’s the service discovery mechanism? What are the retry policies?

Data model constraints: What are the shared data models? What are the bounded contexts? Where should AI-generated services NOT access data directly?

Security requirements: What authentication patterns are required? What are the data classification rules? What must go through the security review regardless of origin?

Scalability thresholds: What are the expected load patterns? What performance characteristics are required? What are the caching requirements?

Compliance boundaries: What data residency requirements exist? What audit logging is mandatory? What cannot be delegated to AI generation?

How to make guardrails AI-interpretable:

Traditional architecture documentation assumes human readers who can interpret intent. AI tools need explicit constraints.

Instead of: “Services should be loosely coupled.”

Write: “Services MUST communicate only through the API Gateway or the Event Bus. Direct service-to-service HTTP calls are prohibited. All inter-service data must use the canonical data models defined in /schemas/.”

The more explicit your constraints, the better AI-generated code will fit your architecture.
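To make the rewritten constraint concrete, here is a minimal sketch of how the "no direct service-to-service HTTP calls" rule could be checked automatically against generated code. The host names `api-gateway.internal` and `event-bus.internal` are hypothetical placeholders, and a regex scan is a deliberately crude stand-in for whatever linting or network policy your platform actually uses.

```python
import re
from dataclasses import dataclass

# Hypothetical host names: in this sketch, the only allowed outbound targets.
ALLOWED_HOSTS = {"api-gateway.internal", "event-bus.internal"}

# Matches http(s) URLs and captures the host part.
URL_PATTERN = re.compile(r"https?://([A-Za-z0-9.-]+)")


@dataclass(frozen=True)
class Violation:
    line_number: int
    host: str


def find_direct_calls(source: str) -> list[Violation]:
    """Return every URL in `source` whose host is not an allowed entry point."""
    violations = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for host in URL_PATTERN.findall(line):
            if host not in ALLOWED_HOSTS:
                violations.append(Violation(line_number, host))
    return violations


# Illustrative generated snippet: one call through the gateway, one direct call.
generated = '''
orders = client.get("https://api-gateway.internal/orders")
stock = client.get("http://inventory-service.internal/stock")
'''

for v in find_direct_calls(generated):
    print(f"line {v.line_number}: direct call to {v.host} is prohibited")
```

The point of the sketch is the shape, not the regex: once a constraint is written as "MUST" and "prohibited" with named entry points, it can be checked by a machine as well as followed by one.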

Component 3: Pilot Approach for AI-Assisted Development

Don’t try to transform everything at once. I’ve seen that fail across multiple organizations. Instead, pilot with bounded projects that teach you what breaks.

Pilot project selection criteria:

Low risk (internal tools, not customer-facing)

Clear success metrics (velocity improvement, quality maintenance)

Representative complexity (not trivially simple, not mission-critical complex)

Team willing to learn (not skeptics who will undermine the experiment)

What to observe during pilots:

Where does AI-generated code violate your architecture standards?

What additional guardrails do you need?

How should code review change for AI-generated code?

What training do architects need?

Pilot timeline:

6-8 weeks is the right pilot duration. Long enough to encounter real issues, short enough to iterate quickly.

Architectural Concerns

Scalability:

AI code generation scales with team size, but creates new bottlenecks in architecture review capacity

Teams under 20 engineers: centralized architecture review works

Teams beyond 50 engineers: automated quality gates become essential

The scaling challenge isn’t the AI tools themselves; it’s the architecture review capacity to catch generated code issues

Plan for 10-15% additional architecture capacity allocation when adopting AI tools at scale

Security:

AI-generated code introduces an elevated security risk requiring enhanced mitigation:

Vulnerability data: CodeRabbit shows 1.57x more security vulnerabilities in AI-generated code; Veracode (2025) found that 45% of AI-generated code contains security vulnerabilities

Attack vectors: MITRE ATT&CK techniques T1190 (Exploit Public-Facing Application) and T1059 (Command and Scripting Interpreter), reached through inadequate input validation and injection vulnerabilities

Financial impact: $4.45M average breach cost (IBM Cost of a Data Breach Report 2023), GDPR penalties reaching 4% of global revenue for compliance failures

Mitigation strategy:

Mandatory SAST scanning (SonarQube, Checkmarx) at PR level

Senior architect review for all architectural decisions involving authentication or data access

Quality gates that reject code with CVSS 7.0+ vulnerabilities

Zero-trust architecture: verify explicitly, use least privilege access, assume breach

Real breach example: Capital One 2019 (100M records exposed, $80M regulatory fine, $190M settlement)

My experience with 14 GDPR/ISO 27001 platforms: Security at the architecture level (not bolt-on) reduces breach probability 60-70%, accelerates audits from 6-8 weeks to 2-3 weeks

Trade-off: 10-15% slower initial velocity for 3-6 months during quality gate implementation, but prevents security nightmares
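The CVSS quality gate from the mitigation list can be sketched in a few lines. This assumes your scanner output has already been normalized into findings that carry a CVSS base score; the `Finding` type and the rule identifiers are hypothetical, and real SAST tools report severity in their own formats.

```python
from dataclasses import dataclass

CVSS_REJECT_THRESHOLD = 7.0  # from the mitigation list: reject CVSS 7.0+


@dataclass(frozen=True)
class Finding:
    rule_id: str       # hypothetical scanner rule identifier
    cvss_score: float  # CVSS base score, 0.0 to 10.0


def gate(findings: list[Finding]) -> tuple[bool, list[Finding]]:
    """Return (passed, blocking_findings) for one pull request."""
    blocking = [f for f in findings if f.cvss_score >= CVSS_REJECT_THRESHOLD]
    return (not blocking, blocking)


# Illustrative findings for a single PR.
findings = [
    Finding("sql-injection", 9.8),
    Finding("verbose-error-message", 3.1),
]
passed, blocking = gate(findings)
print("PASS" if passed else f"FAIL: {[f.rule_id for f in blocking]}")
```

A gate like this would run at PR level, alongside the senior architect review, not in place of it.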

Reliability:

AI tools optimize for working functionality, not fault tolerance

Generated code rarely includes circuit breakers, retry logic, or graceful degradation

30% higher change failure rates when AI code ships without a reliability review (Cortex Engineering, 2025)

Solution: explicit reliability patterns in architectural guardrails, chaos engineering tests for AI-generated critical path code

Budget 2-3 weeks to embed reliability patterns in your AI constraint specifications
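As an illustration of the reliability patterns that generated code tends to omit, here is a minimal sketch of retry with exponential backoff wrapped around a simple circuit breaker. The thresholds and names are assumptions chosen for the example; in production you would more likely reach for a maintained resilience library or your service mesh.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker refuses to call a failing dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("dependency marked unhealthy")
            self.opened_at = None  # half-open: allow one trial call
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result


def call_with_retry(fn: Callable[[], T], attempts: int = 3,
                    base_delay_s: float = 0.1,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `fn` with exponential backoff; never retry an open circuit."""
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # do not hammer a dependency the breaker has isolated
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay_s * 2 ** attempt)
    raise RuntimeError("attempts must be at least 1")


# Illustrative dependency that fails twice, then recovers.
calls = {"n": 0}


def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


breaker = CircuitBreaker(failure_threshold=3)
print(call_with_retry(lambda: breaker.call(flaky), sleep=lambda s: None))
```

Putting a reference pattern like this into the guardrail specification gives AI tools something concrete to imitate, which is more reliable than asking for "fault tolerance" in prose.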

Performance:

AI-generated code contains 1.42x more performance issues compared to human-written code

AI optimizes for correct output, not efficient execution

Solution: performance budgets enforced at PR level, load testing for all AI-generated critical path code

Architectural review required for data structure and algorithm choices

Budget 15-20% additional time for performance optimization and profiling

Trade-off: accept initial performance issues to gain velocity, then optimize the 20% that matters

Maintainability:

AI code has 1.64x more code quality and maintainability errors (CodeRabbit, 2025)

State of Software Delivery 2025: developers now spend more time debugging AI-generated code than benefiting from its speed

Solution: enforce explicit coding standards in guardrails, require refactoring reviews before scale

Implement complexity metrics that trigger mandatory review

Trade-off: slower short-term velocity (0-3 months) for sustainable long-term velocity (6+ months)
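A complexity metric that triggers mandatory review does not need heavy tooling to prototype. The sketch below approximates cyclomatic complexity for Python functions with the standard `ast` module; the set of counted nodes and the threshold are assumptions for illustration, and dedicated analyzers count more cases.

```python
import ast

BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)
REVIEW_THRESHOLD = 10  # hypothetical default; tune per codebase


def complexity(func: ast.AST) -> int:
    """Approximate cyclomatic complexity: 1 + number of decision points."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            score += len(node.values) - 1  # each and/or adds a path
    return score


def functions_needing_review(source: str,
                             threshold: int = REVIEW_THRESHOLD) -> dict[str, int]:
    """Map function name -> score for every function above the threshold."""
    flagged = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            score = complexity(node)
            if score > threshold:
                flagged[node.name] = score
    return flagged


# Illustrative generated code: one trivial function, one tangled one.
sample = '''
def simple(x):
    return x + 1

def tangled(a, b, c):
    if a and b:
        for i in range(c):
            if i % 2 == 0 or i % 3 == 0:
                while a:
                    a -= 1
    return a
'''

print(functions_needing_review(sample, threshold=5))  # {'tangled': 7}
```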

Observability:

AI-generated code rarely includes instrumentation, structured logging, or tracing

You discover this gap when production breaks with no visibility into AI-generated components

Solution: make observability patterns mandatory in architectural guardrails

Auto-inject instrumentation hooks, require distributed tracing context propagation for all service boundaries

Implementation effort: 1-2 weeks to build instrumentation templates, 2-4 weeks to validate across pilots
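To show what "distributed tracing context propagation" means at a service boundary, here is a minimal sketch built on the W3C Trace Context `traceparent` header format. It assumes header names arrive lower-cased in a plain dictionary and uses only the standard library; a real instrumentation template would normally wrap an established tracing SDK instead.

```python
import json
import re
import secrets

# W3C traceparent, version 00: version-traceid-parentid-flags
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def outbound_headers(incoming: dict[str, str]) -> dict[str, str]:
    """Continue the caller's trace if present, otherwise start a new one."""
    match = TRACEPARENT.match(incoming.get("traceparent", ""))
    trace_id = match.group(1) if match else secrets.token_hex(16)
    flags = match.group(3) if match else "01"
    span_id = secrets.token_hex(8)  # new span ID for this hop
    return {"traceparent": f"00-{trace_id}-{span_id}-{flags}"}


def log_event(message: str, headers: dict[str, str]) -> str:
    """Emit one structured log line that carries the trace ID."""
    trace_id = headers["traceparent"].split("-")[1]
    line = json.dumps({"message": message, "trace_id": trace_id})
    print(line)
    return line


# Illustrative incoming request that already belongs to a trace.
incoming = {"traceparent": "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"}
headers = outbound_headers(incoming)
log_event("calling inventory service", headers)
```

The trace ID stays constant across hops while each service contributes its own span ID, which is what lets you follow a request through AI-generated and hand-written components alike.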

Cost Optimization:

AI tools create hidden costs: licensing fees (20-40K Year 1), architecture review time (10-15% additional capacity), technical debt remediation (15-25% of velocity in months 6-12)

ROI positive by month 6-9 if you implement proper guardrails upfront

ROI negative or break-even if you skip guardrails and pay rework costs later

My Experience: invest in guardrails or pay 3x in rework

Team Velocity:

Velocity curve is non-linear: 2x speed for 3 months, then 0.5x speed for 6 months fixing what you broke (without guardrails)

Sustained 1.3-1.5x speed with guardrails from day one

Developer experience matters: engineers without clear guardrails report confusion, ambiguity about when to intervene

Role uncertainty damages morale

Solution: explicit policies on AI tool usage, clear review criteria, training on how to evaluate AI-generated code

Cognitive load decreases when guardrails are clear, increases when ambiguous
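The velocity figures above are easier to compare as cumulative output over the same nine months. This is a back-of-the-envelope sketch that treats each multiplier as constant within its phase and measures output in "baseline-months" of work, which is a simplifying assumption.

```python
def cumulative_output(phases: list[tuple[float, int]]) -> float:
    """Sum of (speed multiplier x months), in baseline-months of work."""
    return sum(speed * months for speed, months in phases)


baseline = cumulative_output([(1.0, 9)])
without_guardrails = cumulative_output([(2.0, 3), (0.5, 6)])
with_guardrails_low = cumulative_output([(1.3, 9)])
with_guardrails_high = cumulative_output([(1.5, 9)])

print(f"baseline:              {baseline:.1f}")              # 9.0
print(f"without guardrails:    {without_guardrails:.1f}")    # 9.0
print(f"with guardrails (low): {with_guardrails_low:.1f}")   # 11.7
print(f"with guardrails (high): {with_guardrails_high:.1f}") # 13.5
```

Under these numbers, the unguarded path ends nine months exactly where a team with no AI tools would have been, while the guarded path is 30-50% ahead.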

Integration Patterns:

AI has no context for YOUR system’s specific constraints

Real failure modes: AI-generated microservices that passed tests but created network latency patterns that kill performance at scale, integration patterns that violated bounded contexts, data flows that bypassed governance controls

Solution: make integration patterns explicit and machine-readable in architectural documentation

Specify: service communication patterns (REST vs. gRPC vs. events), service discovery, retry policies, circuit breaker requirements

The more explicit your integration constraints, the less rework at scale

Evolution Strategy:

This is a transition, not a revolution; migration path matters more than end state

Start with low-risk internal tools (not customer-facing)

Expand to greenfield projects (not legacy rewrites)

Then carefully pilot on bounded legacy components

Timeline: 6-8 week pilot, 3-month controlled rollout, 6-9 months to full adoption with guardrails

Don’t skip stages: organizations that rushed experienced 30-40% velocity reductions, 6-9 months of accumulated technical debt

Migration sequencing: tools first, processes second, culture third

How the Pieces Integrate

The workflow shift is fundamental.

Key integration points:

Rapid prototyping with AI tools BEFORE architecture review (validate ideas in hours, not weeks)

Guardrail specification as the primary architectural output (not detailed design, but constraints)

AI-assisted development within guardrails (speed on the 80%, control on the 20%)

Integration review replacing line-by-line code review (system-level, not implementation-level)

The architect’s role shifts from gating each phase to defining constraints that enable faster flow.

Edge Cases to Watch For

When AI-assisted development doesn’t fit:

Highly regulated domains where audit trails must trace to human decisions

Systems with unusual architectural patterns that AI tools haven’t seen

Security-critical components where the cost of AI error is unacceptable

Legacy system integration where context is too complex to specify as guardrails

When to slow down despite AI pressure:

When you’re generating faster than you can review at the integration level

When technical debt from AI-generated code is accumulating faster than you can address

When team members are using AI as a crutch rather than developing judgment

The goal isn’t maximum AI utilization. It’s maximum value delivery with appropriate risk management.

If you want me to review YOUR architecture against these scenarios, help you define guardrails specific to your ecosystem, or assess your team’s readiness for this transition, I work with select organizations on architecture reviews and transition planning.

What This Looks Like in Practice

What’s Working in Production

Zendesk’s senior director of product shared the most striking metric: “What once took six weeks, from idea to working prototype, now takes just three hours.” Deutsche Telekom reduced development cycles from weeks to days. An ERP platform compressed a four-week, 20-person project into a four-day, four-person sprint.

For professional development teams, Coinbase deployed Cursor firm-wide, with single engineers now refactoring entire codebases in days instead of months. Stripe scaled from hundreds to thousands of employees using Cursor. The aggregate data shows 39% more PRs merged (University of Chicago), 30-40% faster feature delivery, and 55% faster task completion (GitHub Copilot: 1h 11m vs. 2h 41m). Major enterprises report impact: Citigroup paired 30,000 developers with AI tools, Walmart saved 4 million developer hours, and Microsoft’s CEO reports 30% of company code now written by AI.

These aren’t productivity increments. These are category changes in how software gets validated and built.

The Honest Reality: What Varies by Context

But a rigorous METR study (July 2025) found experienced developers using AI tools actually took 19% longer to complete tasks, despite believing they were 20% faster. More than 75% of developers encounter frequent hallucinations, and only 3.8% both trust AI output and see it as accurate.

The productivity gains are real for boilerplate, prototyping, new developers, and greenfield projects. The gains diminish or reverse for complex system integration, legacy codebases, experienced developers on familiar systems, and scenarios requiring deep domain expertise.

What this means for your implementation: Your team’s readiness determines success. Can your architects define guardrails? Can AI tools work within your system constraints, or is your architecture based on tribal knowledge? Your documentation must be explicit enough to serve as AI constraints.

Monday Morning Actions

This week: Experiment with Lovable, Bolt, or Replit (or whatever you like). Identify your 20% (judgment AI cannot replicate). Audit how your architecture review would handle AI-generated code.

This month: Define architectural guardrails (explicit, machine-readable constraints). Update documentation to serve as AI constraint specifications. Discuss role evolution with your team.

This quarter: Pilot a bounded, low-risk project with AI-assisted tools. Develop evaluation criteria for AI-generated code against architecture standards. Invest in integration architecture (the emerging bottleneck).

The Implementation Gap

I’ve given you the complete framework. The 80/20 split. The guardrail approach. The pilot methodology. The workflow transformation. The action items.

It works. I’ve seen it work across 14 platforms, 6 sectors, and organizations ranging from 50-person scale-ups to 3,000-person enterprises (with AI today, and with other groundbreaking technologies in earlier transitions).

But here’s what I can’t give you in an article:

Your specific architecture assessment: Where are YOUR gaps? What’s AI-ready in your ecosystem and what needs work first?

Prioritization for YOUR team: What’s highest risk in YOUR context? What’s the right sequence given YOUR constraints?

The judgment calls: When to move aggressively versus cautiously. How to handle resistance. What to do when the framework doesn’t fit.

The framework is universal. The implementation is specific.

After 20+ years architecting systems and navigating multiple technology transitions, I’ve learned that frameworks look clean in analysis and messy in execution. The organizations that navigate transitions well don’t just understand the trend. They understand their starting point.

If you want help adapting this framework to your specific situation, I work with select organizations on architecture reviews and transition planning. We assess your current architecture’s AI-readiness, identify gaps, prioritize actions, and create a roadmap specific to your systems, team, and constraints.

Interested? Email me: can [at] dataprincipal.io or connect on LinkedIn: https://www.linkedin.com/in/canartuc/

Executive Decision Framework

Executive Summary

TL;DR (30-Second Executive Brief)

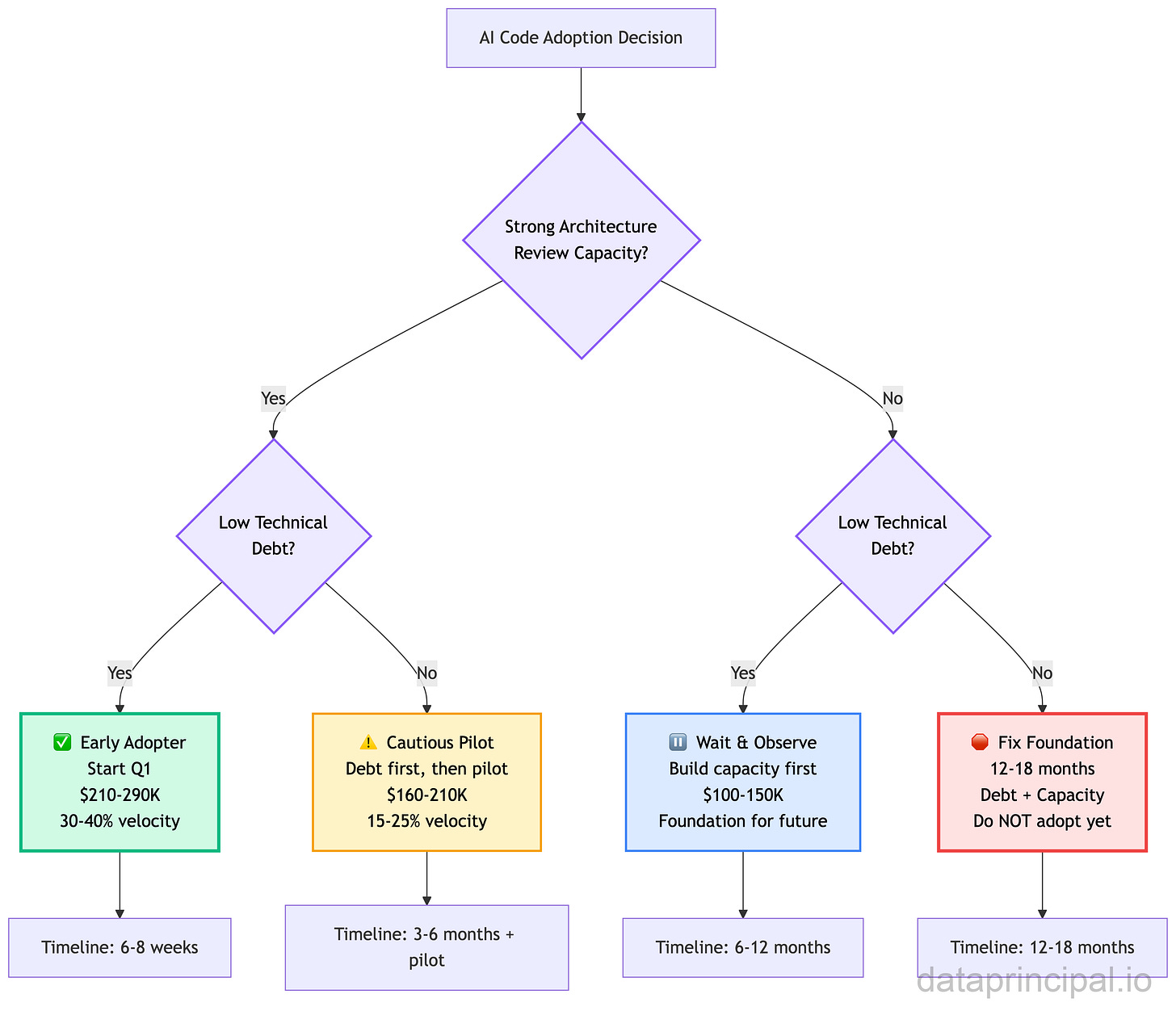

Decision: AI code generation tools (Lovable, Cursor, GitHub Copilot) are reaching production maturity. Your organization must decide: adopt strategically now, wait and observe, or fix the foundation first.

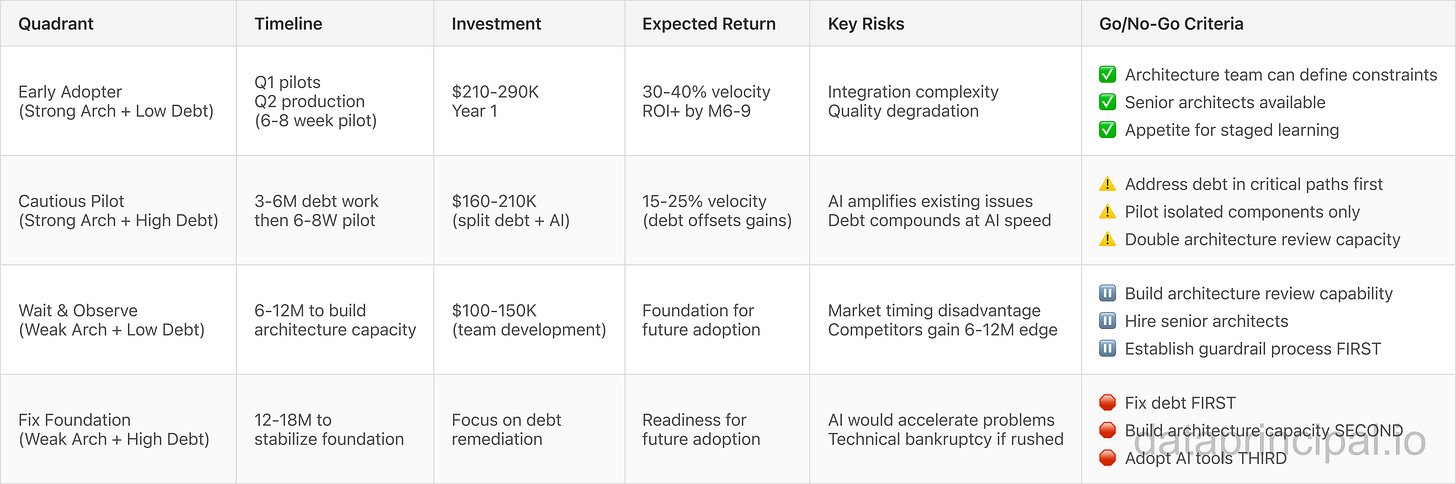

Your Path: Assess two dimensions (architecture review capacity + technical debt level) → Determine readiness quadrant → Execute phased adoption OR build capability first.

Expected Outcome:

✅ With guardrails: 30-40% velocity improvement, ROI by Month 6-9, sustainable competitive advantage

🛑 Without guardrails: 2x initial speed → 3x rework costs → technical bankruptcy

Investment: $210-290K Year 1 (tools, quality gates, architect capacity, training) for Early Adopters. ROI breakeven by Month 6-9. Full framework below.

The Strategic Challenge:

Organizations face a critical decision: adopt AI code generation tools now with current constraints, wait for market maturity, or risk competitive disadvantage. After architecting 14 platforms and coordinating 3,000+ professionals through transformation programs, I can tell you the window for competitive advantage is narrow. $330M in funding, $6.6B valuation, 100,000 daily projects, and adoption by Klarna, Uber, Zendesk, and Deutsche Telekom signal this isn’t experimental anymore. It’s table stakes.

The Framework:

This article presents an 80/20 decision framework based on 20+ years of technology adoption patterns.

AI handles the first 80% (boilerplate, CRUD, standard patterns). Architects handle the critical 20% (scale architecture, technical debt strategy, build vs. buy decisions, governance, integration patterns, security).

The framework covers readiness assessment, guardrail design, pilot methodology, and risk mitigation.

Key Decision Points:

Team readiness versus market timing.

Build quality gates versus accept velocity risk.

Invest in architectural guardrails upfront (6-9 month ROI) versus pay 3x rework costs later.

Pilot with bounded projects versus transform everything at once.

Your choice: lead the transition strategically or react to market pressure tactically.

Strategic Value:

Organizations that move strategically capture advantage while avoiding pioneer tax (bleeding edge failures) and laggard penalty (market irrelevance).

The data: 30-40% velocity improvement with proper guardrails, 2x speed followed by 0.5x speed rework without them. Your competitive position depends on which path you choose.

Strategic Decision Framework

Use this matrix to determine your adoption strategy:

Note on Pricing: All investment figures, timelines, and ROI projections in this framework are estimates based on typical enterprise implementations. Actual costs depend on organization size, existing infrastructure, team composition, geographic location, vendor selection, and specific implementation requirements. Use these figures as directional guidance for planning conversations with your finance and engineering leadership.

Your Position: Assess your organization’s architecture review capacity (strong vs. weak) and technical debt level (low vs. high) to determine which quadrant applies.

Adoption Decision Tree:

Trade-Off Analysis:

First-mover advantage (12-18 months velocity edge, early learning) versus pioneer tax (unproven patterns, higher risk).

Late adoption (proven patterns, lower risk) versus laggard penalty (competitive disadvantage, talent flight).

Sweet spot: move after early patterns stabilize (we’re there now with $330M validation), but before the market expects it as a baseline capability (6-12 months away).

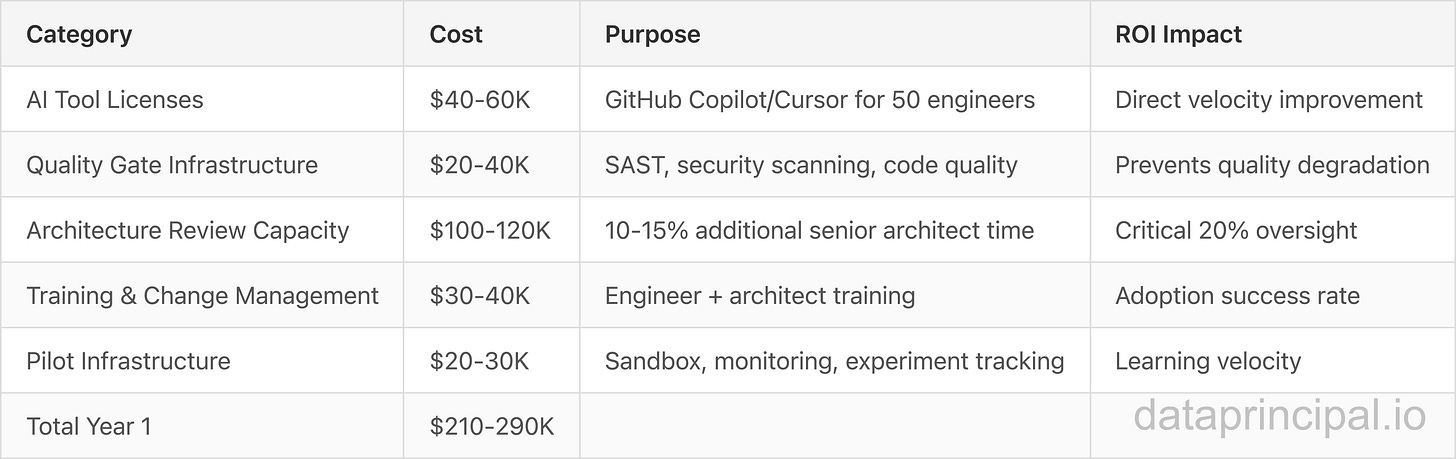

Budget & ROI Analysis

Year 1 Investment Breakdown:

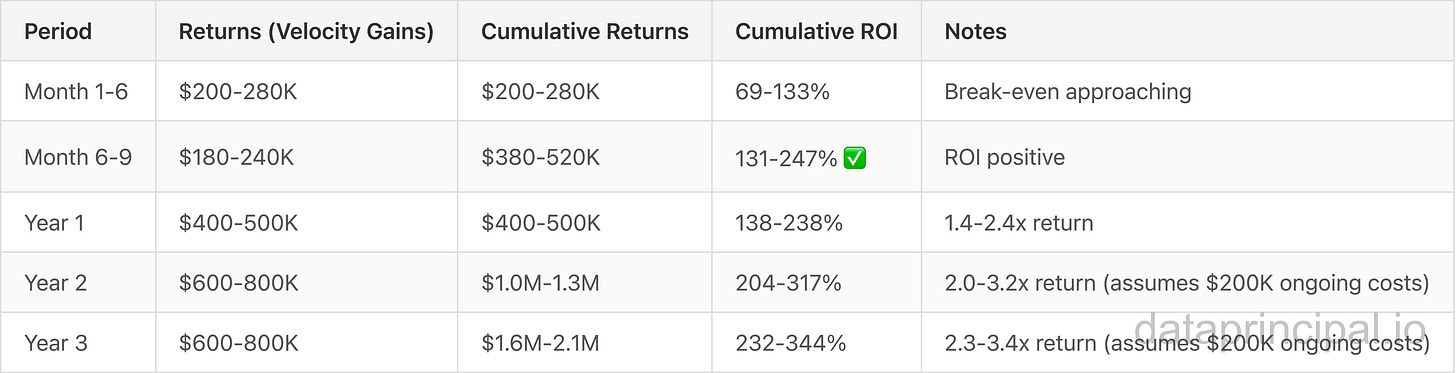

Returns Timeline:

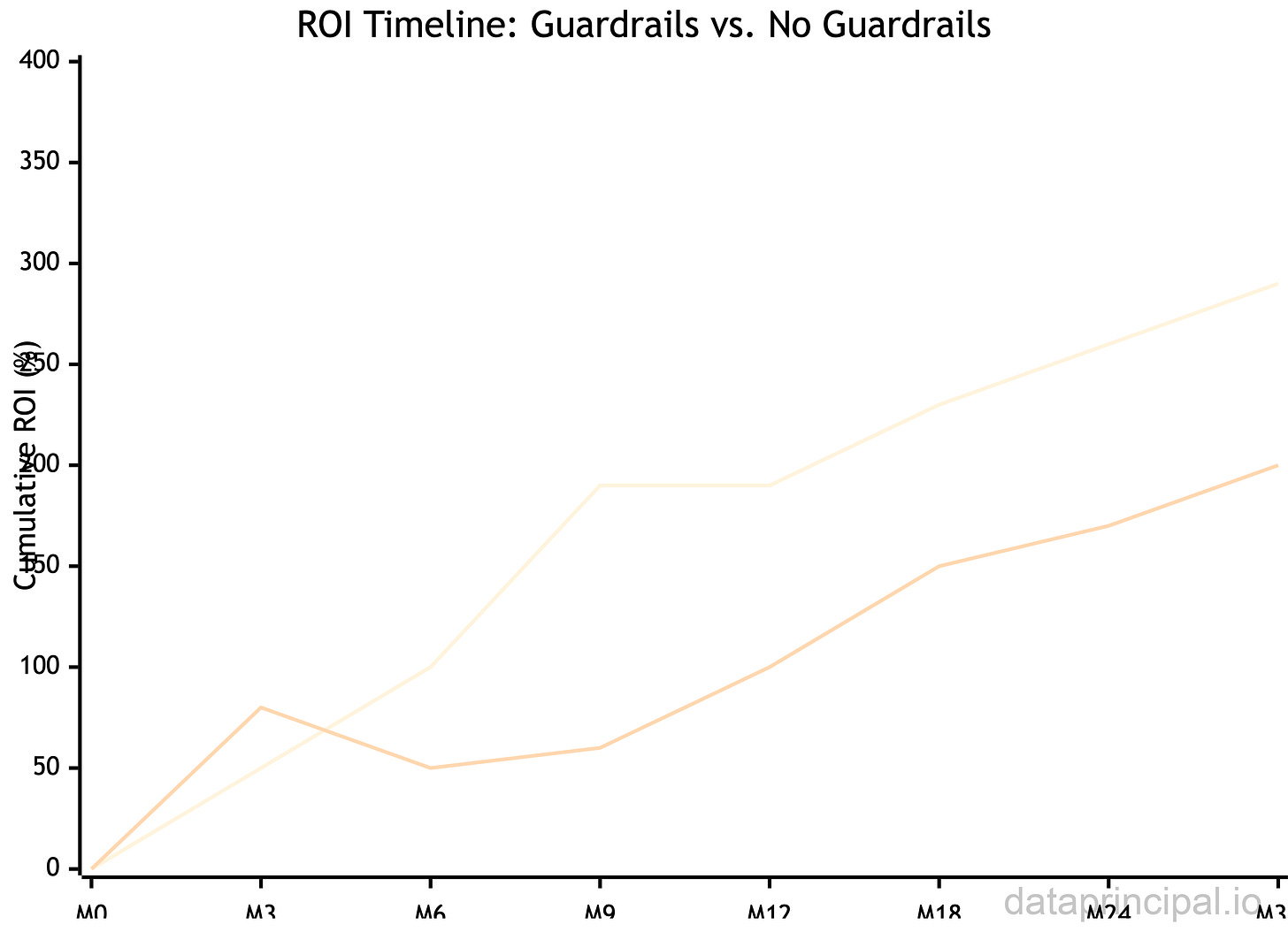

ROI Curve (With vs. Without Guardrails):

Key Insight: Guardrails investment (6-9 month breakeven) delivers 45% higher 3-year ROI (290% vs 200%) compared to rushing adoption without guardrails (18-24 month breakeven with rework costs).
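For readers checking the arithmetic, the "45% higher" figure is a relative uplift, not a gap in percentage points. A short sketch using the two ROI values quoted above:

```python
roi_with_guardrails = 290.0     # 3-year ROI, percent
roi_without_guardrails = 200.0  # 3-year ROI, percent

# Gap in percentage points between the two scenarios.
point_gap = roi_with_guardrails - roi_without_guardrails

# Relative uplift of the guarded path over the unguarded path.
relative_uplift_pct = point_gap / roi_without_guardrails * 100

print(f"gap: {point_gap:.0f} percentage points")        # 90
print(f"relative uplift: {relative_uplift_pct:.0f}%")   # 45%
```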

Validation Data Points:

University of Chicago study: 39% more PRs merged after AI agent adoption

GitHub Copilot controlled study: 55% faster task completion (1h 11m vs. 2h 41m)

Deutsche Telekom: development cycles from weeks/months to days

Zendesk: six weeks to three hours (Jorge Luthe, Senior Director of Product)

Enterprise average: 33-36% reduction in development time

Low-code AI platforms: 75% reduction in development time, 65% lower costs compared to traditional

Hidden Costs to Budget For:

Technical debt remediation if guardrails insufficient: 15-25% velocity drag (months 6-12)

Quality regression fixes if gates inadequate: Prevented by upfront investment

What to Ask Your Team

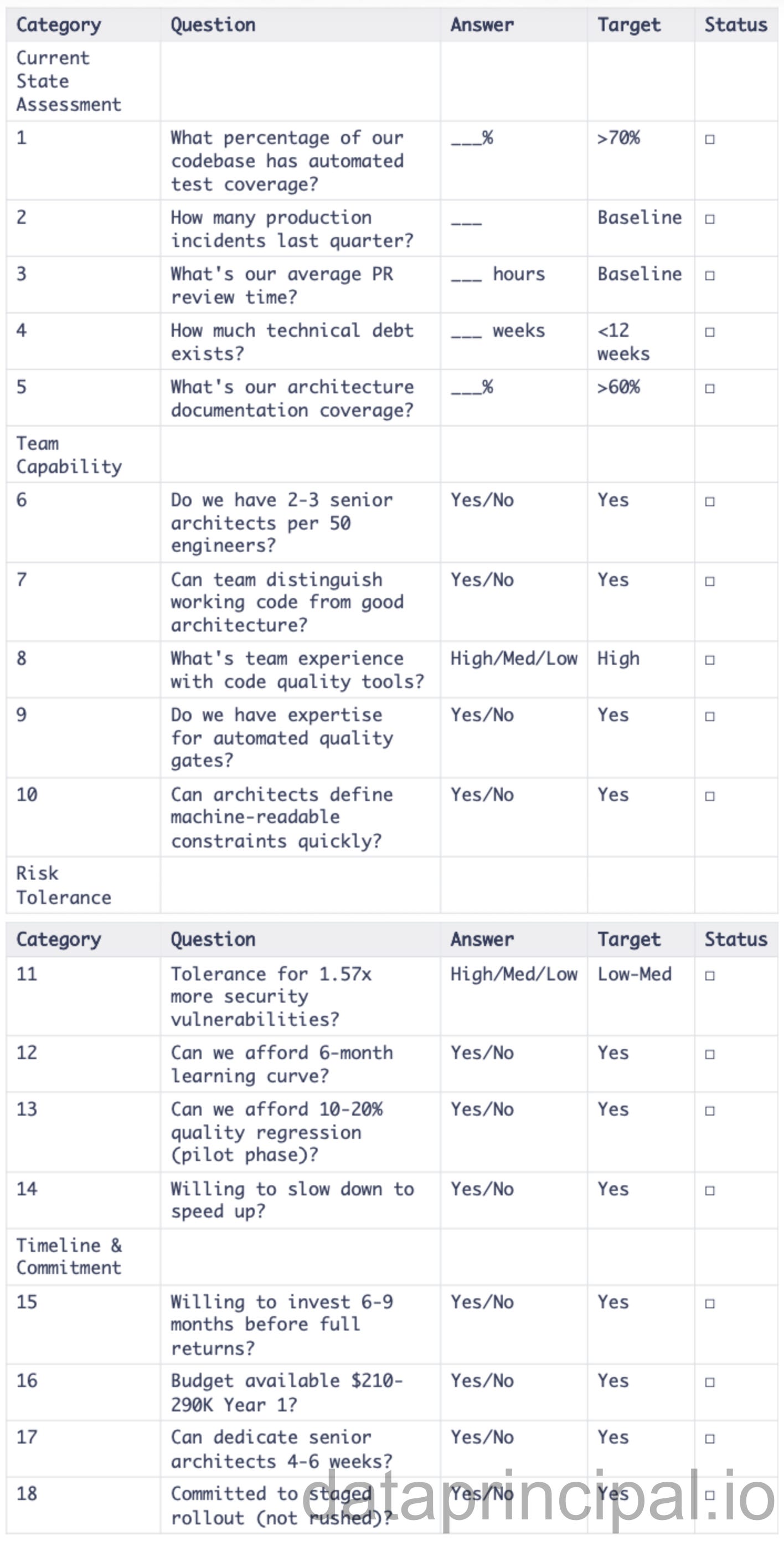

Before committing to AI tool adoption, validate readiness with your engineering leadership using this 18-question framework:

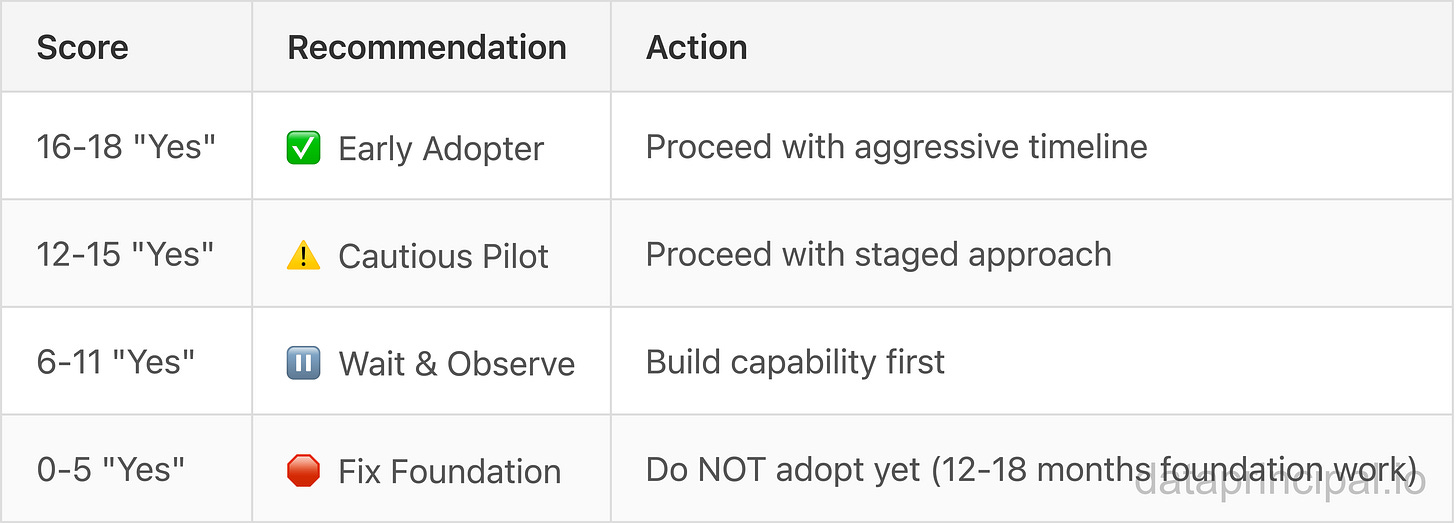

Go/No-Go Scorecard:

Print this checklist and use it in your readiness assessment meeting with engineering leadership.

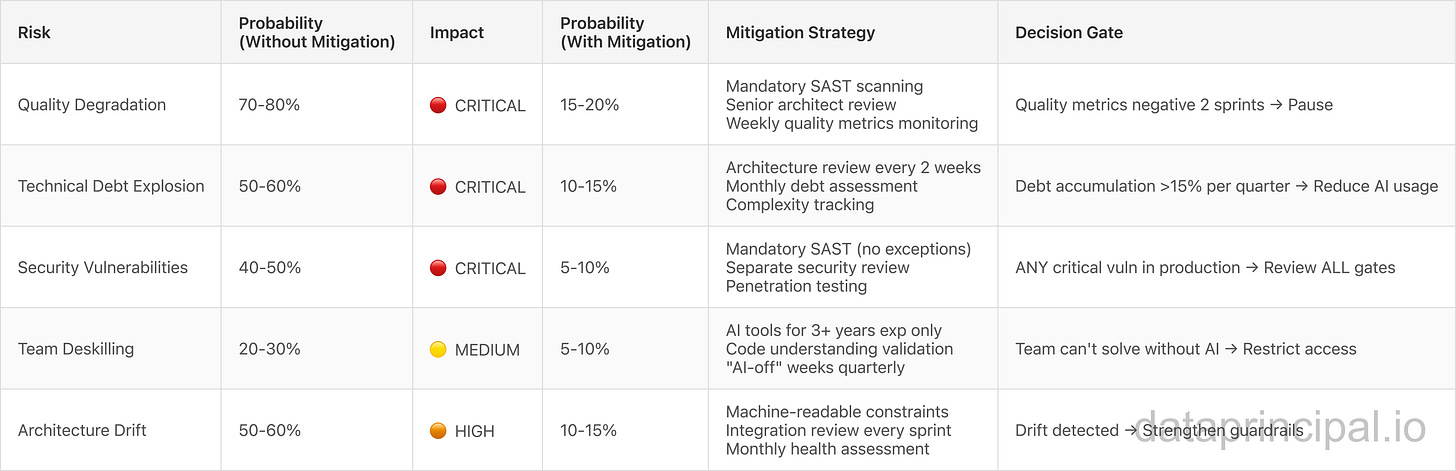

Risk Assessment

Risk Matrix (Likelihood x Impact):

Risk Severity Legend:

🔴 CRITICAL: Production incidents, security breaches, compliance violations

🟠 HIGH: System becomes unmaintainable, performance degradation

🟡 MEDIUM: Team capability degradation, hiring challenges

Key Insight: All 5 risks drop to <20% probability with proper mitigation. Without mitigation, 3 out of 5 risks are >50% likely. Guardrails are NOT optional.

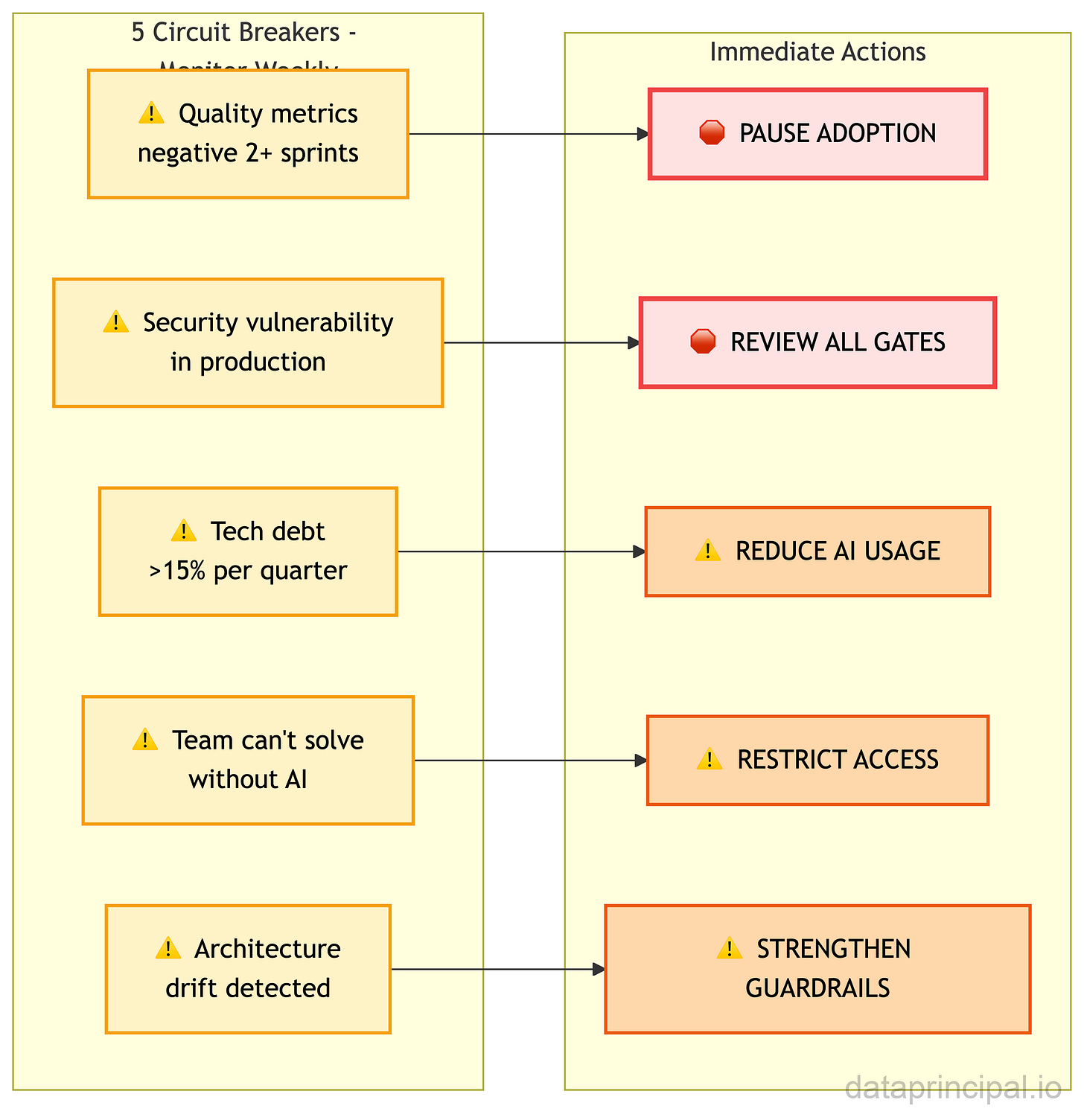

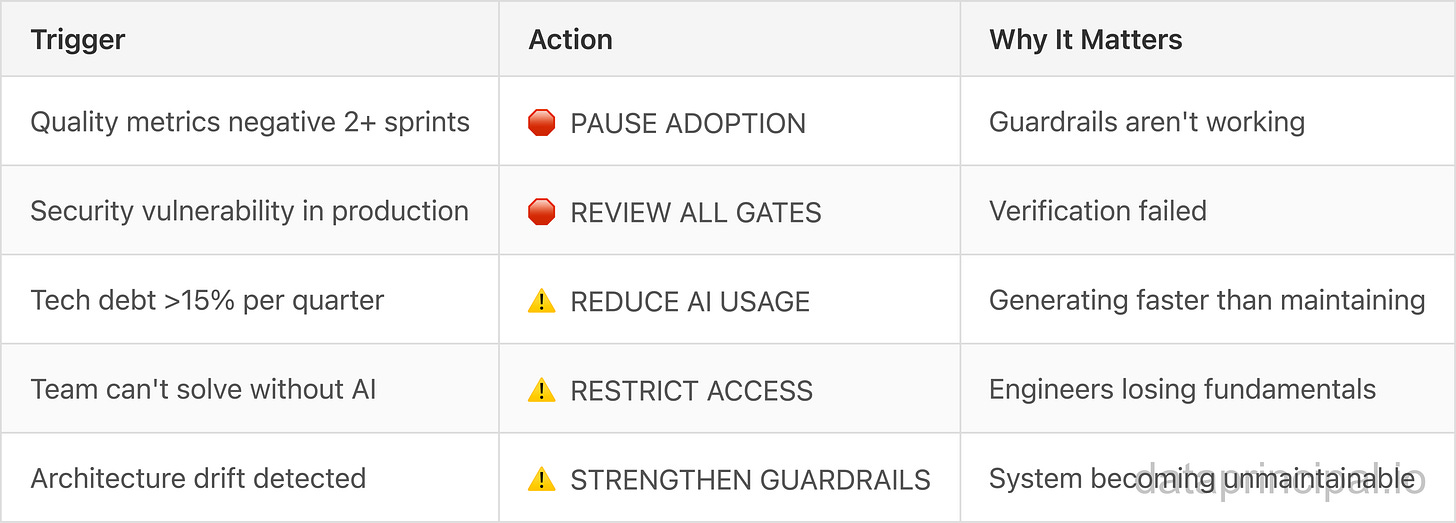

Critical Decision Gates: When to Hit the Brakes

These are your circuit breakers. When any of these conditions appear, immediate action is required—no exceptions, no waiting for next quarter’s review.

What triggers each action:

These aren’t suggestions. These are hard stops that protect your organization from AI-driven technical bankruptcy.

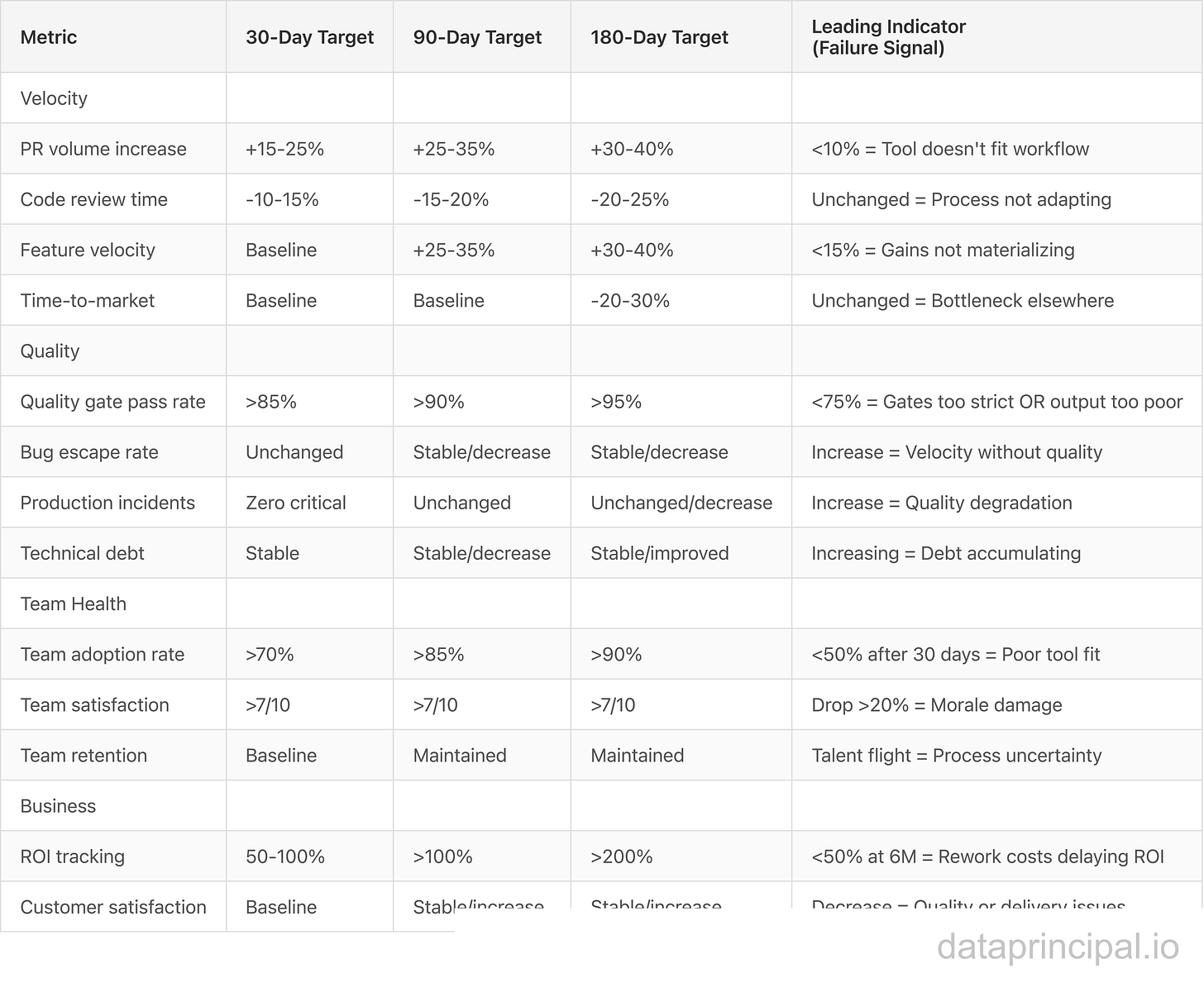

Success Metrics

Metrics Dashboard (Track Weekly):

Measurement Methodology:

Baseline: Establish 90-day historical metrics BEFORE adoption (critical for comparison)

Tracking: Automated dashboards (GitHub, Jira, DataDog, security tools) with real-time visibility

Validation: Monthly architecture health assessment by senior architects (qualitative + quantitative)

Reporting: Executive dashboard with trend lines, thresholds, and decision gates
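The baseline step is the one teams skip most often, so here is a minimal sketch of what "compare against baseline" means in practice. The sample numbers are placeholders for illustration only, not measurements, and the function name is hypothetical. In a real setup the two series would come from whatever your dashboards export.

```python
from statistics import mean


def change_vs_baseline(baseline: list[float], current: list[float]) -> float:
    """Fractional change of the current average against the baseline average."""
    if not baseline or not current:
        raise ValueError("both series need at least one observation")
    base_avg = mean(baseline)
    if base_avg == 0:
        raise ValueError("baseline average is zero; relative change is undefined")
    return (mean(current) - base_avg) / base_avg


# Placeholder values: PRs merged per week before adoption and during the pilot.
baseline_weekly_prs = [40, 42, 38, 40]
pilot_weekly_prs = [50, 54, 52, 52]

change = change_vs_baseline(baseline_weekly_prs, pilot_weekly_prs)
print(f"PRs merged per week vs. baseline: {change:+.0%}")
```

Without the pre-adoption series there is nothing to divide by, which is why the baseline has to be captured before the first engineer turns the tool on.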

Red Flags (Leading Indicators That Predict Failure):

PR volume ↑ but bug escape rate ↑ → Velocity without quality

Team adoption <50% after 30 days → Tool doesn’t fit workflow

Quality gate pass rate <75% → Gates too strict OR AI output too poor

Refactoring budget >25% → Debt accumulating faster than expected

Team satisfaction drops >20% → Morale damage from unclear expectations
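The red flags above are simple enough to automate. The sketch below encodes the five thresholds from the list as a single check over a weekly snapshot. The `WeeklySnapshot` type and its field names are assumptions of mine, and I am assuming every value is expressed as a fraction (0.30 means 30%). Adapt the shape to whatever your metrics pipeline actually produces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeeklySnapshot:
    """Hypothetical weekly metrics; changes are fractions relative to baseline."""
    pr_volume_change: float
    bug_escape_rate_change: float
    adoption_rate: float             # share of the team actively using the tool
    days_since_rollout: int
    gate_pass_rate: float
    refactoring_budget_share: float  # share of capacity spent on refactoring
    satisfaction_change: float       # negative means satisfaction dropped


def red_flags(s: WeeklySnapshot) -> list[str]:
    """Return the red flags from the list above that this snapshot triggers."""
    flags = []
    if s.pr_volume_change > 0 and s.bug_escape_rate_change > 0:
        flags.append("velocity without quality")
    if s.days_since_rollout >= 30 and s.adoption_rate < 0.50:
        flags.append("tool does not fit workflow")
    if s.gate_pass_rate < 0.75:
        flags.append("gates too strict or AI output too poor")
    if s.refactoring_budget_share > 0.25:
        flags.append("debt accumulating faster than expected")
    if s.satisfaction_change < -0.20:
        flags.append("morale damage from unclear expectations")
    return flags


healthy = WeeklySnapshot(0.30, -0.05, 0.80, 45, 0.90, 0.10, 0.05)
troubled = WeeklySnapshot(0.40, 0.15, 0.40, 30, 0.70, 0.30, -0.25)

print(red_flags(healthy))
print(red_flags(troubled))
```

The healthy snapshot returns an empty list and the troubled one trips all five flags. Returning the reasons rather than a single boolean keeps the weekly review focused on which flag fired.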

Next Steps

Use this phased implementation roadmap to execute your adoption strategy. Complete each phase before proceeding to the next. Decision checkpoints determine go/no-go at each stage.

Phase 1: Assessment & Validation (Week 1)

Action Items:

Share “What to Ask Your Team” questions with engineering leadership

Review current architecture maturity and technical debt level

Validate budget availability ($210-290K Year 1)

Assess risk tolerance against Risk Assessment section

Determine adoption readiness quadrant (Early Adopter, Cautious Pilot, Wait & Observe, or Fix Foundation)

Success Criteria (Week 1 End):

Readiness score >12/18 on team assessment questions

Budget approved and allocated

Risk tolerance aligned across leadership

Decision Checkpoint: ✅ Proceed to pilot planning OR ⏸️ Fix Foundation First (12-18 months)
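To keep the Phase 1 checkpoint from turning into a debate, it can be written down as an explicit rule. This is a minimal sketch under two assumptions of mine: each of the 18 questions scores one point, and any unmet criterion sends you to "Fix Foundation First". The type and function names are hypothetical.

```python
from dataclasses import dataclass

TOTAL_QUESTIONS = 18
READINESS_THRESHOLD = 12  # the score must exceed this, per the criteria above


@dataclass(frozen=True)
class Phase1Assessment:
    readiness_score: int
    budget_approved: bool
    risk_tolerance_aligned: bool


def phase1_decision(a: Phase1Assessment) -> str:
    """Apply the three Week 1 success criteria as a single go/no-go rule."""
    if not 0 <= a.readiness_score <= TOTAL_QUESTIONS:
        raise ValueError(f"readiness score must be between 0 and {TOTAL_QUESTIONS}")
    passed = (
        a.readiness_score > READINESS_THRESHOLD
        and a.budget_approved
        and a.risk_tolerance_aligned
    )
    return "proceed to pilot planning" if passed else "fix foundation first"


print(phase1_decision(Phase1Assessment(14, True, True)))
print(phase1_decision(Phase1Assessment(12, True, True)))
```

Note that a score of exactly 12 does not pass, because the criterion is strictly greater than 12 out of 18.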

Phase 2: Pilot Planning (Weeks 2-4)

Action Items:

Select 2-3 senior engineers for pilot program (5+ years experience, open to AI tools)

Choose low-risk, non-customer-facing features for initial pilot (internal tools, admin dashboards)

Implement quality gate infrastructure (SAST, linters, security scanning, automated checks)

Define explicit architectural guardrails (integration patterns, security requirements, data model constraints)

Establish success metrics baseline (30-day, 90-day, 180-day metrics from current state)

Schedule weekly pilot reviews (rapid learning cycle)

Success Criteria (Week 4):

Quality gates functional and integrated into CI/CD pipeline

Architectural guardrails documented and communicated

Baseline metrics established for comparison

Decision Checkpoint: ✅ Proceed to pilot execution OR ⏸️ Strengthen gates and guardrails first
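"Documented and communicated" is a low bar. A guardrail becomes enforceable when a CI job can check it. As one possible illustration, the sketch below expresses a single integration-pattern rule as data (which packages a layer may not import) and checks Python source against it using the standard library `ast` module. The layer and package names are invented for the example, and real guardrails would cover far more than imports.

```python
import ast

# Hypothetical guardrail: packages each layer must not import from.
FORBIDDEN_IMPORTS = {
    "ui": {"payments.db", "orders.internal"},
}


def imported_modules(source: str) -> set[str]:
    """Collect the module names imported anywhere in the given source."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            found.add(node.module)
    return found


def violations(layer: str, source: str) -> list[str]:
    """List guardrail violations for source code belonging to a layer."""
    forbidden = FORBIDDEN_IMPORTS.get(layer, set())
    hits = []
    for module in sorted(imported_modules(source)):
        for banned in sorted(forbidden):
            # Match the banned package itself and any of its submodules.
            if module == banned or module.startswith(banned + "."):
                hits.append(f"{layer} must not import {module}")
    return hits


sample = "import orders.internal.pricing\nfrom payments.db import session\nimport json\n"
for problem in violations("ui", sample):
    print(problem)
```

The same check runs identically on human-written and AI-generated code, which is the point: the gate does not care who or what produced the change.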

Phase 3: Pilot Execution (Weeks 5-12, 6-8 week pilot)

Action Items:

Run controlled pilot with 2-3 selected engineers

Monitor quality, security, and velocity metrics weekly

Adjust approach based on early indicators (strengthen gates if quality slips)

Conduct bi-weekly architecture health assessments (qualitative review of system integrity)

Document learnings and refine processes for broader rollout

Success Criteria (Week 12):

90-day metrics positive (velocity improvement without quality degradation)

Quality maintained or improved (bug escape rate stable or better)

No security regressions (zero incidents from AI-generated code)

Decision Checkpoint: ✅ Proceed to controlled rollout OR ⚠️ Adjust strategy OR 🛑 Pause adoption

Phase 4: Controlled Rollout (Weeks 13-24, Quarter 2)

Action Items:

Expand pilot to 30-50% of team (qualified engineers only: 3+ years experience, demonstrated fundamentals)

Continue weekly metrics monitoring (early detection of issues)

Conduct monthly architecture health assessments (prevent drift)

Strengthen gates if quality slips, refine guardrails if architecture drifts

Prepare for broader rollout if metrics positive (document learnings, refine processes, train additional architects)

Success Criteria (Week 24):

180-day metrics positive (sustained velocity improvement)

ROI trajectory on target (6-9 month breakeven timeline maintained)

Team velocity +30-40% vs. baseline

Technical debt accumulation <15% per quarter

Decision Checkpoint: ✅ Scale to full team OR ⏸️ Continue controlled rollout OR 🛑 Pivot strategy

Phase 5: Scale or Pivot (Week 25+, Quarterly Review)

Action Items:

Comprehensive review of 180-day metrics against success criteria

Strategic ROI validation (financial returns vs. investment)

If successful: Scale to full team with refined guardrails and proven processes

If unsuccessful: Pivot strategy, strengthen foundation, or pause until readiness improves

Long-term adoption decision based on data (not hope or external pressure)

Success Criteria (Quarterly):

Full team adoption ready (metrics consistently positive for 6+ months)

ROI breakeven achieved (6-9 month target)

Sustainable velocity gains without quality degradation

Decision Checkpoint: ✅ Full adoption OR ⚠️ Continued controlled rollout OR 🛑 Strategic pause (data-driven)

Critical Success Factors:

Executive sponsorship: 6-9 month timeline to ROI breakeven (patience required, resist pressure to rush)

Senior architect availability: 4-6 weeks upfront investment for guardrail definition (non-negotiable)

Staged discipline: Follow decision checkpoints strictly (no skipping phases based on optimism)

Data-driven pivots: Willingness to pause if metrics negative (ego-free decision making)

Quality gate commitment: Invest in gates or pay 3x in rework (no shortcuts on quality infrastructure)
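Staged discipline is easier to keep when the process itself refuses to skip ahead. The sketch below models the five phases as an ordered sequence in which a phase only advances after its own checkpoint passes. The class and method names are mine, and it is a toy model of the roadmap above, not a project management tool.

```python
from enum import IntEnum


class Phase(IntEnum):
    ASSESSMENT = 1
    PILOT_PLANNING = 2
    PILOT_EXECUTION = 3
    CONTROLLED_ROLLOUT = 4
    SCALE_OR_PIVOT = 5


class Roadmap:
    """Tracks the current phase and only advances on a passed checkpoint."""

    def __init__(self) -> None:
        self.current = Phase.ASSESSMENT
        self.history: list[tuple[Phase, bool]] = []

    def record_checkpoint(self, phase: Phase, passed: bool) -> Phase:
        # Checkpoints for any phase other than the current one are rejected.
        if phase != self.current:
            raise ValueError(
                f"cannot record {phase.name}; current phase is {self.current.name}"
            )
        self.history.append((phase, passed))
        if passed and phase < Phase.SCALE_OR_PIVOT:
            self.current = Phase(phase + 1)
        return self.current


roadmap = Roadmap()
roadmap.record_checkpoint(Phase.ASSESSMENT, passed=True)
roadmap.record_checkpoint(Phase.PILOT_PLANNING, passed=False)  # stays in planning
print(roadmap.current.name)
```

A failed checkpoint leaves the roadmap where it is, and an attempt to record a later phase raises an error. That is the "no skipping phases based on optimism" rule expressed as a constraint.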

Need help assessing your organization’s readiness?

I work with select organizations on architecture assessments and strategic technology adoption. After architecting 14 production platforms and navigating multiple technology transitions, I can help you:

Validate your team’s readiness for AI code adoption using the 18-question framework

Assess architecture maturity and technical debt impact on adoption timeline

Identify specific risks for YOUR context and design mitigation strategies

Build a phased adoption roadmap aligned with your constraints, team capacity, and risk tolerance

Define explicit, machine-readable architectural guardrails specific to your ecosystem

Design quality gates that balance velocity with reliability

This isn’t about selling tools. It’s about making the right decision for YOUR organization at YOUR maturity stage with YOUR constraints.

Interested? Email me: can [at] dataprincipal.io or connect on LinkedIn: https://www.linkedin.com/in/canartuc/

What History Tells Us

Let me close with perspective from two decades of watching these transitions.

Every major technology shift in software has followed the same pattern:

1. New capability emerges

2. Skeptics dismiss it as toys

3. Early adopters prove it works

4. Investment floods in

5. The capability becomes table stakes

6. The industry reorganizes around the new reality

We’re somewhere between steps 3 and 4 right now. The $330M just accelerated our journey toward step 5.

The professionals who thrived in previous transitions (mainframe-to-client-server, client-server-to-web, web-to-cloud, cloud-to-cloud-native) asked the same question: “How do I apply my expertise in the new paradigm?”

The ones who struggled asked: “How do I preserve my role in the old paradigm?”

The 99% just got access to the first 80%.

Our job is the last 20%.

And it just became more valuable than ever.

What’s your take? Is your organization experimenting with AI development tools yet? How are you thinking about evolving architectural practices? Share your experience in the comments.

The framework is complete. The implementation path is clear. But adapting this to YOUR specific context—your team’s capabilities, your existing architecture, your organizational constraints—requires judgment I can’t provide in an article.

If you want help assessing your organization’s readiness, defining guardrails specific to your ecosystem, or building a phased adoption roadmap aligned with your constraints, I work with select organizations on architecture reviews and transition planning.


Sources

Lovable Funding & Growth

Lovable raises $330M at $6.6B valuation - TechCrunch, December 18, 2025

Google and Nvidia back Lovable - CNBC, December 18, 2025

Lovable surges to $200M ARR in 12 months - Tech Startups, November 18, 2025

Lovable customer case studies: Deutsche Telekom, Zendesk, ERP platform - Tech.eu, December 18, 2025

Cursor Enterprise Adoption

Cursor enterprise customers: Coinbase, Stripe, Optiver - Cursor Official Website, 2025

Cursor adoption trends and real enterprise data - Opsera, 2025

How Cursor hit $100M ARR in 12 months - WeAreFounders, 2025

AI Code Generation Research & Impact

AI code generation statistics and enterprise results - Netcorp Software Development, 2025

METR Study: AI makes experienced developers 19% slower - METR, July 2025

State of AI code quality in 2025 - Qodo, 2025

ROI of AI code generation: metrics and time saved - Zencoder, 2025

Software Architecture & AI

Impact of AI on Software Architecture - ArXiv, 2025

How AI is redefining the role of a software architect - IcePanel, July 2025

Architecture and Design InfoQ Trends Report 2025 - InfoQ, 2025

Low-Code AI Market

AI and Low-Code/No-Code Tools in 2025 - BrilWorks, 2025

Best low-code AI platforms 2025 with production results - Appsmith, 2025

AI Code Quality & Security Issues

CodeRabbit: AI-generated code produces 1.7x more issues than human code - Yahoo Finance, December 2025

AI-authored code needs more attention, contains worse bugs - The Register, December 17, 2025

45% of AI-generated code contains security vulnerabilities - TechRadar, 2025

GitHub Copilot: 40% of code found vulnerable in academic study - Communications of the ACM

Why AI-generated code is creating a technical debt nightmare - Okoone, 2025

The vibe coding delusion: Startups paying the price for AI technical debt - Tech Startups, December 11, 2025

The hidden costs of coding with generative AI - MIT Sloan Management Review

The AI developer crisis of 2025: Hidden failures breaking codebases - Medium, 2025