€2.1 Million in Dead Stock Started with a Slide Deck

The AI strategy was approved in six weeks. Nobody talked to the data team. The model shipped on ghost records and duplicate customers. This is how it went.

Marcus Wendt found out about Renhardt Logistics’ AI strategy the same way the warehouse team did. A company-wide email on a Monday morning. Subject line: “Our AI-Powered Future: Intelligent Demand Forecasting by Q3.”

He read it twice. Then he opened the data catalog and stared at it for a long time.

Marcus had been the senior data engineer at Renhardt for three years. He knew what was in the warehouse. He knew what wasn’t. And he knew that the thing they had just promised to the board and to a press release already in draft did not have a foundation to stand on.

All names, companies, and events in this story are fictional. Any resemblance to real organizations, products, events, or related entities is coincidental. Any resemblance to your last three jobs is structural.

What the strategy assumed

The slide deck was polished. Forty-two pages. It proposed an AI-driven demand forecasting model that would reduce overstock by 30% and cut logistics waste across their European distribution network.

The deck referenced “our rich data assets” on slide nine.

Marcus knew those assets. Three source systems fed the warehouse: the ERP (SAP, migrated eighteen months ago, still producing ghost records from the old instance), the CRM (Salesforce, maintained by a sales ops team that redefined “customer” twice in 2024), and a logistics tracking platform that logged shipment events in local time zones with no UTC normalization.

The word “customer” meant something different in each system. It had meant something different for four years. There was a Jira ticket about it from 2022. It was still open.

Who was in the room

The AI strategy had been built over six weeks. Marcus later learned who was involved.

Diane Koller, the Chief Digital Officer, had seen a demand forecasting demo at a logistics conference in Amsterdam. She brought the idea to the executive team. The CEO liked it. They brought in Aldwyn Partners, a consulting firm that specialized in “AI transformation roadmaps.” Aldwyn assigned a team of four. None of them asked to see the data catalog. None of them talked to Marcus or anyone on his team.

An external vendor, Praedion, was brought in to provide the ML platform. Their sales engineer did a two-hour demo with synthetic data. It looked impressive. The procurement process started the same week.

The strategy was approved in an executive offsite. The timeline was set. The press release was queued.

Marcus was not invited to any of these meetings. Neither was his manager.

“Support the integration.”

By Tuesday, Marcus had a Confluence page with three sections. The first listed every definition conflict across the three source systems. The second mapped the data quality gaps that would directly impact model accuracy. The third estimated what it would take to fix them: five to seven months of foundational work before any model could be trained on reliable inputs.

He sent it to his manager, Priya Nair, Director of Data Engineering. Priya read it, confirmed his findings, and forwarded it to Diane Koller’s office.

Nothing happened for two weeks.

Then Marcus got a calendar invite. “AI Forecasting: Data Readiness Check-In.” Thirty minutes. Diane, the Aldwyn lead, the Praedion account manager, Priya, and Marcus.

The meeting was uncomfortable. The Aldwyn lead asked if the data issues could be “worked around.” The Praedion account manager suggested their platform had “built-in data harmonization.” Marcus asked which definition of “customer” the harmonization would choose. The room went quiet for more than four seconds...

Diane said Q3 was not moving. The board had approved the budget. The press release was out. Aldwyn would handle the data mapping as part of their workstream. Marcus and his team should “support the integration.”

Priya tried to push back. She asked for a written scope of what “support” meant and what quality bar Aldwyn would meet on the data layer. Diane said they would “figure it out as they go.”

After the meeting, Marcus asked Priya what they should do. Priya said, “Document everything. Send it in writing. And then do what they tell us.”

What Aldwyn built

Aldwyn’s statement of work covered the AI roadmap and data mapping. Data quality validation was not in scope. Nobody purchased it.

Their data mapping took three weeks. They picked the CRM definition of “customer” because it had the most records. They did not check whether those records were deduplicated. They were not. The same customer appeared two or three times, each time with a different regional code, depending on which sales rep entered them.

For the timestamp problem, they applied a flat UTC+1 offset across all shipment events. Renhardt operated in four time zones. Nobody on the Aldwyn team asked which ones.

The Praedion platform ingested everything without complaint. The model was trained. The first forecasts came out in July, two weeks ahead of the Q3 deadline. Diane sent a company-wide update with a chart. The numbers looked good.

Marcus read the forecast for the Hamburg distribution center. It predicted a 40% spike in demand for a product category that had been discontinued six months earlier. The model had learned from ghost records in the ERP that were never cleaned up after the SAP migration.

He flagged it. Aldwyn’s project lead called it “an edge case” and said the model would “self-correct with more data.” Marcus escalated to Priya. Priya escalated to Diane. Diane said proceed. The consultant gave a bad technical opinion but the authority to stop belonged to Diane, and she did not use it.

Ghost demand goes live

The model went live in September. For six weeks, the forecasts looked reasonable enough that nobody questioned them. Procurement teams across four distribution centers began adjusting orders based on the model’s output.

By November, the Hamburg center had excess stock worth €2.1 million in a category driven almost entirely by duplicate customer records and ghost demand signals. The Dortmund center had underordered cold-chain goods because the timestamp offset had shifted seasonal patterns by three weeks.

Marcus’s team found the root causes in two days. They had been obvious from the start. They were on the Confluence page he wrote in March.

Where Aldwyn was by then

Their contract ended in August. On schedule. They delivered what they were hired to deliver. The final report was titled “AI Demand Forecasting: Implementation Summary and Recommendations for Scale.” Forty-one pages. It referenced their “successful deployment” and recommended “Phase 2 expansion” to the remaining seven distribution centers. The problem was not that they left. The problem was that nobody purchased what came next.

Praedion’s contract was still active. Their support team suggested retraining the model with “more recent data.” The data was still wrong. Retraining on wrong data does not produce the right answers. It produces different wrong answers with higher confidence scores.

Eleven months later

Marcus and his team spent the next three quarters doing what he had proposed in March: reconciling customer definitions, fixing the timestamp normalization, removing ghost records, and building quality checks that should have existed before any model was trained.

The forecasting project restarted the following February. The model was retrained on clean data. It reduced overstock by 14%, not the 30% from the slide deck. The number was real, and the procurement teams trusted it.

Total elapsed time from announcement to production-ready system: eighteen months. Marcus’s original estimate for the foundation work: five to seven months. The difference was not just time. It was €2.1 million in dead stock, two quarters of procurement decisions based on ghost data, an Aldwyn invoice that had never checked again, and three engineers on Marcus’s team who updated their LinkedIn profiles in October.

No retrospective

Nobody went back and read Marcus’s Confluence page from March. There was no retrospective. No one acknowledged that the data issues he raised before a single line of model code was written were the same issues that took down the system eight months later.

Diane presented the “relaunched” forecasting system to the board as a success. She called it “iterative development.”

In the hallway after the board meeting, Priya told Marcus she appreciated what he had done. She also told him to stop writing risk assessments unless she asked him to. “You’re right about all of it,” she said. “But being right in an email is not the same as being safe.”

Marcus did not leave Renhardt. But he stopped raising issues in meetings. He started keeping a personal log, timestamped and offline, for the day when someone might ask what went wrong. He knew from experience that the question usually came twelve months too late, and the people asking it were never the ones who had been in the room.

The slide deck does not break when deployed. The data does.

Does that sound similar to you? Maybe the same for another engineer, too, but s/he will not learn if you don’t share!

Data platforms that survive growth, stress, and reality. - Can Artuc - can [at] dataprincipal.io

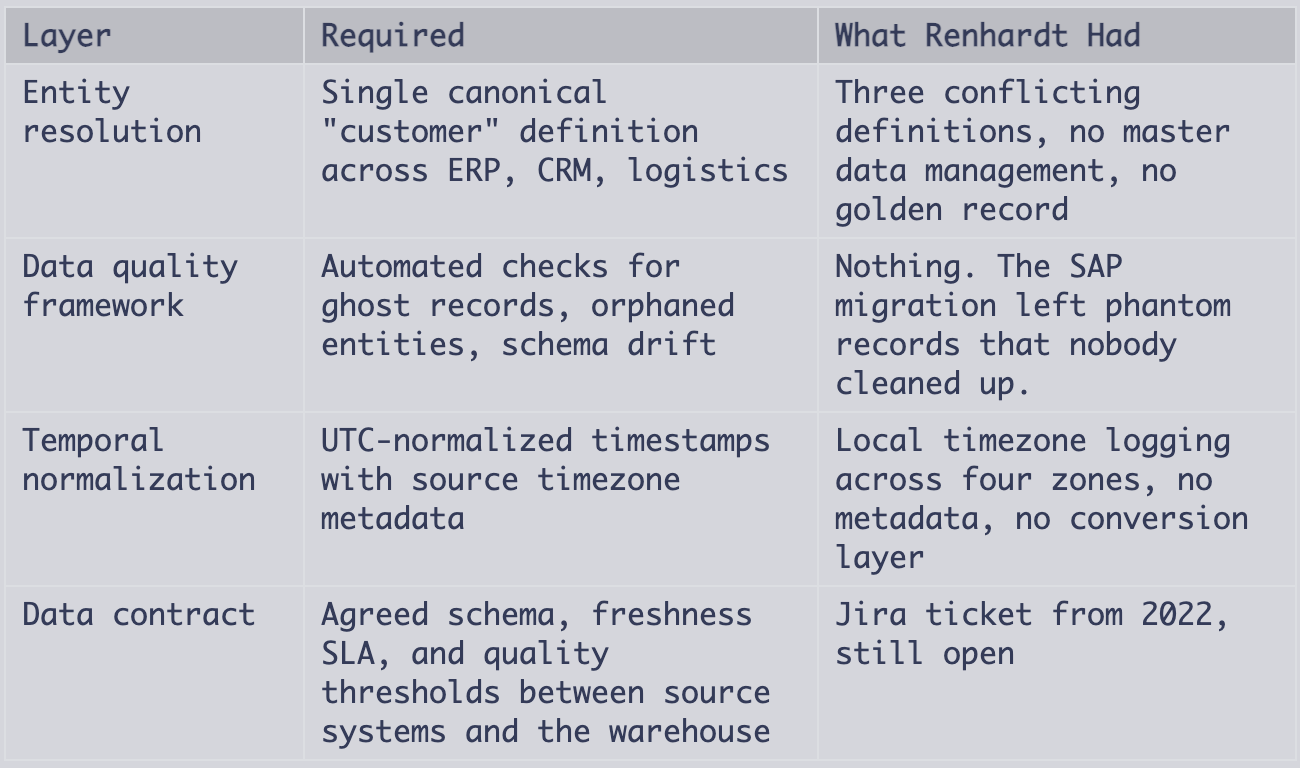

Below: the four layers Renhardt needed before any model touched the warehouse, and the one question that would have caught it in the first meeting.

The following section will be paywalled soon. This is a sample for premium subscribers to view. The paid section is the kind of review that costs $300/hr in consulting.

The Pattern Behind the Story

Pattern: The Readiness Assumption

An executive sees a demo. A consulting firm builds a roadmap without talking to the team that owns the data. The timeline is set before anyone checks whether the inputs exist, match, or mean what the slide deck says they mean.

The buyer walks into this pattern carrying three assumptions. All three are wrong:

“The data is ready.” The buyer assumes this without checking. Three source systems with different definitions of “customer” are not an edge case. It is the default state of every company that grew through acquisitions, CRM changes, or ERP migrations. If nobody has reconciled entity definitions across systems, the data warehouse is a collection of opinions, not facts. The consulting firm maps what exists. The buyer never verified what exists.

“The platform handles it.” The buyer accepts this from the vendor demo without verifying it against their own data. ML platforms ingest data. They do not validate it (if it is not explicitly configured to do so). “Built-in data harmonization” means the platform picks one schema and maps everything to it. It does not know that your CRM redefined “customer” twice, or that your ERP still produces records from a decommissioned instance. The platform does exactly what you tell it. If you tell it to train on garbage, it trains on garbage.

“The team will support it.” The buyer defines scope this way. “Support the integration” is a directive without a definition. It means: do what the consultants need, on their timeline, with no authority to block or redirect. The data team becomes a service desk for a project they were not consulted on and cannot quality-check. The buyer set those terms, not the consultant.

Red flags in your org:

An AI/ML initiative is announced before the data team sees the proposal

External consultants are scoped without a data readiness workstream

Your team is asked to “support” without a written scope or quality bar

The vendor demo used synthetic data, not your actual data

The timeline is set by a press release or board commitment, not by a technical assessment

Your move when you’re Marcus:

Document everything. Priya was right about that. But send it to your manager in a format that can be forwarded without editing: numbered risks, estimated impact, and a clear ask. “Five to seven months of foundation work” is accurate but easy to dismiss. “Three unresolved entity conflicts that will cause the model to double-count 23% of customer records” is harder to ignore.

If your manager forwards it and nothing happens, you have done your job. Do not escalate over your manager’s head. Do not bring it up again in a meeting where the decision has already been made. The Confluence page is your protection, not your weapon.

And if three engineers on your team update their LinkedIn profiles in October, take the meeting with the recruiter. You now have a story about what you tried to prevent and what it cost. That story is worth more in your next interview than another year of cleaning up after consultants who left in August.

The structural problem

Organizations purchase AI deployments without purchasing the data readiness that makes them work. Diane’s team bought a roadmap, a platform integration, and a go-live date. Nobody bought entity resolution. Nobody bought data quality validation. Nobody bought timestamp normalization. The statement of work never included a data readiness workstream because nobody wrote one in.

Aldwyn delivered what was in the contract. Their forty-one-page summary referenced a “successful deployment” because deployment was the deliverable. Accuracy was never purchased. You cannot hold a contractor accountable for a foundation that was never in the blueprint.

Until buyers scope data readiness as a prerequisite, not an afterthought, this pattern will repeat. It will repeat at your company. The only variable is the amount in euros.

Principal Data Architect Summary

If this project landed on my desk for review before go-live, I would have killed it in the data readiness check-in meeting. The idea was fine. Demand forecasting with ML is a solved problem in logistics. The architecture underneath it was not.

What was missing before any model work started:

Marcus’s five-month estimate covered the bare minimum:

Master data management for customer entity (reconcile three definitions into one, deduplicate, assign canonical IDs)

Ghost record cleanup in ERP (identify and soft-delete records from the pre-migration SAP instance)

Timestamp normalization pipeline (convert all shipment events to UTC, store original timezone as metadata)

Data quality checks at ingestion (reject or quarantine records that fail entity resolution or temporal validation)

Data contract between source systems and the ML training pipeline (schema version, freshness window, minimum quality score)

Without these five, any model trained on this warehouse is learning from noise. The 40% demand spike for a discontinued product was the model working exactly as designed, on data that should never have reached it.

The architectural mistake was treating the ML platform as the starting point instead of the data platform. Praedion’s platform did nothing wrong. It ingested what it was given and trained as it was told to. The platform is the last layer, not the first. Aldwyn started at the top of the stack and assumed everything below it was solid. Marcus knew it was not. The four-second silence in that meeting was the sound of a €2.1 million lesson being approved.

But the architectural and organizational problems were the same. Diane’s team treated Marcus’s data engineering group as a service desk (”support the integration”) when the project required close collaboration: joint problem-solving on entity definitions, shared ownership of data quality, and feedback loops between the data and model layers. There was no enabling team, no platform team feedback loop, no collaboration mode. The org structure made the gap inevitable. Conway’s Law did what it always does: the system Renhardt built mirrored the communication paths Diane chose, which excluded the one team that understood the data.

If you are reviewing a similar proposal in your org:

Ask one question in the first meeting: “Show me the data contract between the source systems and the training pipeline.” If the answer references a slide deck instead of a schema registry, you are looking at the same pattern that cost Renhardt €2.1 million.